The Dashboard Made Them Blind

Why workflow-level decomposition is the difference between AI that transforms and AI that quietly degrades

Most of what companies call an AI strategy is actually just a shopping list. Tools to evaluate, pilots to run, use cases to ‘explore.’ Which is fine, as far as it goes. It just does not get you very far. Most strategies quietly die in the gap between “we should use AI for asset management” and knowing what to build, where the human remains vital, and how the two combine to produce something genuinely better. This piece tries to close that gap.

EXECUTIVE SUMMARY

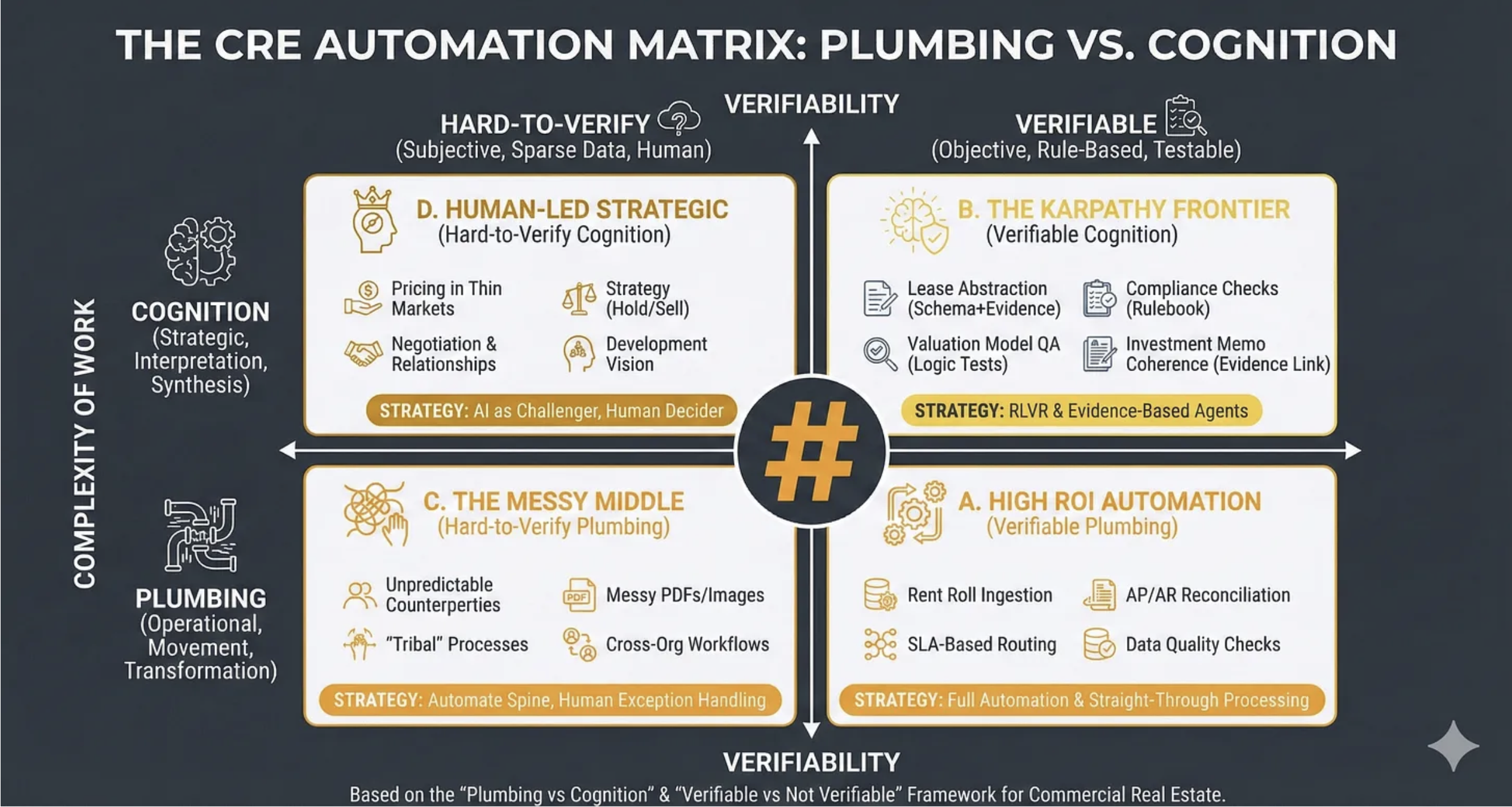

A credible AI roadmap starts by decomposing workflows to the task level. Each task then needs to be assessed through four questions: what constraints AI removes, what new ones it introduces, where value emerges across three horizons, and how the work should be redesigned so human and machine compound rather than compete. This piece applies that process to a single workflow: threat identification in an office REIT’s asset management cycle. The findings challenge assumptions: the same task can sit in different places on the CRE Automation Matrix depending on jurisdiction and data quality. Automating the measurable can degrade your capability in the unmeasurable. And the genuinely transformative horizon (H3) isn’t a better dashboard: it’s a different operating model for the function itself.

_______________

This essay pulls together several frameworks I’ve unpacked in recent newsletters - particularly RIRA, the CRE Automation Matrix, and the Prompting Framework - and applies them in detail to a single real estate workflow.

_______________

THE WORKFLOW

Over the past few months I’ve introduced a framework stack for creating value with AI in CRE: RIRA for strategy, the CRE Automation Matrix for analysis, and the Prompting Framework for execution. Together they answer three questions: how are we creating value, what kind of work is this, and how do we get it done?

This piece takes those frameworks off the shelf and applies them to a real workflow at the level of granularity a roadmap actually requires. The aim is not to be cheaper or faster, though you will be both. The aim is to be demonstrably, verifiably better: to produce a quality of output the traditional operating model cannot match. That requires surgical decomposition - seeing exactly where to push hard on the AI and where to optimise the human. Neither happens without genuine system redesign.

Take a hypothetical mid-cap European office REIT. 45 assets across the UK, Germany, and the Netherlands. Property management outsourced. Asset management team of twelve.

The asset manager’s recurring job is to answer four questions continuously:

How is income performing versus plan?

What is threatening that income?

What interventions will protect or grow it?

What needs to happen next?

We’re going to take the second, threat identification, and apply the RIRA process. I’ve chosen this because it’s where the Automation Matrix classification is most contested, and where getting the human-machine boundary wrong creates real financial risk rather than just inefficiency.

Here’s what every asset manager knows but rarely says out loud: they are doing seven fundamentally different jobs simultaneously when they identify threats to income. And what RIRA reveals, when you decompose properly, is that those seven jobs need seven different human-machine configurations.

SEVEN JOBS, SEVEN DIFFERENT PROBLEMS

1. Lease event surveillance. 300+ breaks, expiries, rent reviews, and options in a rolling 24-month window. Knowing not just when they are, but which ones bite: a small lease that’s individually immaterial but leaves an entire floor vacant.

2. Tenant financial monitoring. Payment patterns, public financials, sector stress, balance sheet signals. Ranges from the trivially observable to the analytically complex.

3. Relationship intelligence. Tenant conversations, building walkthroughs, broker networks, the PM’s building manager mentioning something over coffee. None of this is in any database. For experienced asset managers, it is frequently the earliest threat signal.

4. Market threat assessment. Supply pipeline, rental direction, demand shifts, regulatory risk. The data exists; the interpretation of what it means for this specific building does not.

5. Physical and regulatory risk. EPC deadlines are rules-based and trackable. Building condition data is fragmented across PDFs, building management systems (BMS), and property managers’ heads.

6. Valuation trajectory. Forming a view on whether the external valuer will write an asset down, which requires understanding not just the comparables but how the specific valuer thinks.

7. Synthesis. Pulling 1–6 together, reading the interactions between them, deciding what to escalate. This is where the experienced asset manager earns their salary.

The instinct, the one most AI strategies follow, is to scan this list and classify: automate surveillance, partially automate credit monitoring, leave valuation judgement alone. That classification is right only at a level so crude that it becomes dangerous.

RELEASE: WHAT CHANGES AND WHAT GETS HARDER

Before deciding what to automate, you need to understand both the constraints AI removes and the ones it introduces.

This is RIRA’s first phase, Release, and it is where the strategic picture forms.

What opens up:

The surveillance bandwidth constraint disappears. An asset manager with five multi-let buildings triages by rent quantum. Big tenants get attention; small ones get reviewed when something goes wrong. AI removes this: every event monitored continuously, regardless of size. This matters because the damaging event isn’t always the biggest tenant’s break. Sometimes it’s three small tenants all exercising in the same quarter, triggered by the same market dynamic, that nobody spotted as a pattern because each was individually immaterial.
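The three-small-tenants pattern is easy to state and easy to miss, so here is a minimal Python sketch of the check. It assumes a hypothetical feed of lease events carrying an asset, a quarter, and each tenant's share of that asset's rent. The field names and both thresholds (5% for "individually immaterial", 10% for "worth flagging together") are illustrative assumptions, not figures from any real system.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class LeaseEvent:
    asset: str          # hypothetical asset identifier
    tenant: str
    quarter: str        # e.g. "2026-Q3"
    rent_share: float   # tenant's share of the asset's passing rent (0 to 1)


def find_clusters(events, immaterial_below=0.05, cluster_at=0.10):
    """Flag asset/quarter groups where individually small events add up.

    Both thresholds are illustrative assumptions.
    """
    groups = defaultdict(list)
    for e in events:
        # Keep only the events a rent-quantum triage would normally skip.
        if e.rent_share < immaterial_below:
            groups[(e.asset, e.quarter)].append(e)

    clusters = {}
    for key, members in groups.items():
        total = sum(m.rent_share for m in members)
        if len(members) >= 2 and total >= cluster_at:
            clusters[key] = (sorted(m.tenant for m in members), round(total, 4))
    return clusters


events = [
    LeaseEvent("Asset-01", "Tenant A", "2026-Q3", 0.04),
    LeaseEvent("Asset-01", "Tenant B", "2026-Q3", 0.04),
    LeaseEvent("Asset-01", "Tenant C", "2026-Q3", 0.03),
    LeaseEvent("Asset-01", "Tenant D", "2026-Q4", 0.04),  # alone in its quarter
    LeaseEvent("Asset-02", "Tenant E", "2026-Q3", 0.30),  # large, so already watched
]

clusters = find_clusters(events)
```

Only the Asset-01 group in 2026-Q3 is flagged: three events that a size-based triage would each wave through, adding up to 11% of the asset's rent in one quarter. Grouping by asset and quarter is the simplest possible key; a real version would probably also group by floor or by the shared market driver.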

Evidence assembly collapses from hours to minutes. Portfolio-level pattern recognition becomes possible for the first time: correlated threats across assets that no individual has the bandwidth to see. Multi-scenario analysis per asset per quarter becomes routine instead of exceptional.

What gets harder:

The false negative problem. This deserves to be treated as a first-order design constraint, not a risk to manage after the fact. Automated surveillance monitors what it’s configured to monitor. New threat types - a planning authority changing designations, a shift in corporate occupier strategy, a regulatory change not yet enacted - won’t trigger alerts because nobody anticipated them when the rules were written.
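A minimal Python sketch of why this happens, assuming a hypothetical rule table keyed by event type. The event types, field names, and the 30-day arrears threshold are illustrative assumptions; the 24-month window is the one used earlier in this piece.

```python
# Hypothetical rule table: one check per event type someone thought of in advance.
RULES = {
    "lease_break": lambda e: e["months_to_event"] <= 24,
    "rent_arrears": lambda e: e["days_overdue"] > 30,
}


def run_monitor(events):
    """Return (alerts, unmatched) for a list of event dicts."""
    alerts, unmatched = [], []
    for event in events:
        rule = RULES.get(event["type"])
        if rule is None:
            # No rule was ever written for this type of event.
            unmatched.append(event)
        elif rule(event):
            alerts.append(event)
    return alerts, unmatched


events = [
    {"type": "lease_break", "months_to_event": 6},
    {"type": "planning_designation_change", "asset": "Asset-01"},
]

alerts, unmatched = run_monitor(events)
```

The lease break raises an alert; the planning change raises nothing, because no rule exists for it. This sketch at least keeps the unmatched events in a list a human could read. A naive monitor discards them, and a threat that never reaches the feed as an event does not get even that far.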

Consider what has actually destroyed significant value in institutional office portfolios recently: COVID, the energy price spike, the acceleration of distributed working, regulatory tightening on Energy Star or EPCs. A monitoring system designed the year before any of these would not have caught them. A good asset manager, reading the market and talking to tenants, might have.

Then there’s automation-induced complacency. Almost every technology tool introduced into asset management over the past 15 years has exhibited the same pattern: the tool replaces attention rather than supplementing it, because people are busy and the tool gives them permission to stop doing the effortful work. Automated rent tracking was introduced; people stopped walking floors. Dashboards appeared; people stopped reading detailed PM reports.

The uncomfortable implication is that the better automated surveillance works for known threats, the more it can degrade your capability for unknown ones. Better AI will not fix this. It is a human behaviour problem that the Redesign phase of RIRA has to address directly.

Add the verification gap - who checks the AI-assembled threat picture when the asset manager’s implicit quality control came from assembling it themselves? And the PM data governance question - were those outsourced PM contracts even written to contemplate automated analysis? With second-order consequences like these, the constraints-introduced side of the ledger is at least as consequential as the constraints-removed side.

THE AUTOMATION MATRIX: WHERE CLASSIFICATION GETS INTERESTING

The mistake is to treat a workflow as one kind of work. It rarely is.

With constraints mapped, you classify each task on the CRE Automation Matrix: what kind of work is this, and how verifiable is it? The generic classification - lease surveillance is Quadrant A, tenant credit is Quadrant B, valuation is Quadrant D - looks clean.

But it’s wrong in three ways that matter.

The same task sits in a different quadrant depending on jurisdiction. Lease event surveillance for well-abstracted UK leases with verified data is Quadrant A: rules-based, testable, automatable. But German commercial leases operate under BGB provisions with fundamentally different mechanics, from how break rights and rent adjustments work to how formal documentation requirements apply. Even after the 2025 reform that relaxed the old Schriftform written-form standard, new complexities have emerged around documentation and the legal status of informal agreements. Dutch office leases under Article 7:230a of the Civil Code have their own distinct framework again: different renewal and termination mechanics, a critical classification question about which statutory regime applies, and materially different consequences depending on the answer.

One workflow. Three markets. Three different quadrant positions. Build a system for the UK classification and deploy it uniformly: reliable for English leases, potentially dangerous elsewhere. The point generalises beyond these three jurisdictions: wherever you operate across borders, the automation profile is jurisdiction-specific. Local knowledge isn’t a nice-to-have. It’s a structural requirement.

The same task shifts quadrant depending on data quality. Tenant credit analysis is Quadrant B where data is clean: public financials, credit ratings, structured sector data. For privately held Continental European tenants where public data is limited or delayed, the same analysis moves into hard-to-verify territory. The evidence base isn’t there. You’re relying on relationship intelligence, not structured data.
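The two shifts described so far amount to saying that classification is a function of task, jurisdiction, and data quality, not of task alone. A minimal Python sketch, encoding only the positions stated above. The function name, task labels, and the `UNCLASSIFIED` placeholder are my illustrative assumptions; the placeholder is deliberate, because the right quadrant for German or Dutch leases, or for thin private-company data, is a call for someone with local knowledge.

```python
UNCLASSIFIED = "UNCLASSIFIED: needs local review"


def classify(task, jurisdiction, data_clean):
    """Return a quadrant letter, or a placeholder where the text gives none.

    Only the positions stated in the article are encoded; everything else
    is left open on purpose rather than guessed.
    """
    if task == "lease_event_surveillance":
        # Quadrant A applies to well-abstracted UK leases with verified data.
        if jurisdiction == "UK" and data_clean:
            return "A"
        return UNCLASSIFIED
    if task == "tenant_credit_analysis":
        # Quadrant B applies where public financials and ratings are available.
        return "B" if data_clean else UNCLASSIFIED
    return UNCLASSIFIED


for jurisdiction in ("UK", "DE", "NL"):
    print(jurisdiction, classify("lease_event_surveillance", jurisdiction, True))
```

The design choice that matters is the fallback. A system that defaults unknown combinations to the UK answer is the uniform deployment the paragraph above warns against.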

Automating the measurable degrades your capability in the unmeasurable. This is the finding that matters most. Build a tenant credit dashboard. It monitors payment patterns and public financials well. It generates confidence. And because the dashboard is ‘handling’ tenant risk, the pressure on asset managers to maintain their tenant relationships - the conversations, the site visits, the reading of signals - quietly diminishes. People are busy. The dashboard gives them permission to stop.

But the tenant about to exercise a break doesn’t always show up in the financials. They show up in how they’ve stopped investing in their fit-out, the half-empty floors, the facilities manager who mentions in passing that the company is looking at options. That intelligence comes from being present. If you’re not present because the system is ‘handling it,’ you’ve automated yourself into a blind spot.

THREE HORIZONS: FROM FASTER TO FUNDAMENTALLY DIFFERENT

Once the task types are clear, the question becomes not just what gets cheaper, but what becomes possible.

H1: the efficiency gains. Automated calendars, payment dashboards, market data assembly, compliance trackers. Worth doing immediately. Table stakes within two years. Same picture, faster. Every REIT will build these.

H2: the capability upgrade. Evidence-linked threat assessments: every claim traced to source - the lease clause, the comparables with adjustment logic, the payment history, the sector outlook. The asset manager doesn’t assemble the picture; they evaluate it. A fundamentally different use of their time.

Portfolio-level pattern detection: a capability that doesn’t exist today in any form, even manual. The system surfaces correlated threats that no individual can see. The portfolio committee conversation shifts from “tell me about your buildings” to “here are the portfolio-level patterns - which do we act on?”

These are frontier capabilities - cognitive work made verifiable through evidence engineering. Hard to build. Hard to replicate. Where defensible competitive advantage lives.

H3: the transformation. Now apply the H3 Provocation Framework - the questions designed to push past efficiency and capability into genuine structural change.

What becomes free, and whose business breaks? The binding constraint in threat identification is the asset manager’s cognitive bandwidth: deep monitoring of 3–5 assets, not more. If AI removes the surveillance and evidence assembly constraints, a senior asset manager (AM) might oversee 8–10. The relationship layer sets the ceiling - you can’t maintain deep tenant intelligence across fifteen buildings - but it rises meaningfully. Whose business model depends on the current bandwidth constraint? Every outsourced AM provider whose fee assumes the current ratio of human attention per asset.
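To make the ratio concrete, here is the arithmetic for the hypothetical 45-asset REIT as a short Python sketch. It assumes, simplistically, that every asset needs one owner and that assets divide evenly across people; the function name is mine.

```python
import math

ASSETS = 45  # portfolio size of the hypothetical REIT


def managers_needed(assets, assets_per_manager):
    """People required if each can deeply monitor a fixed number of assets."""
    return math.ceil(assets / assets_per_manager)


today = [managers_needed(ASSETS, n) for n in (3, 5)]     # 3-5 assets each
with_ai = [managers_needed(ASSETS, n) for n in (8, 10)]  # 8-10 assets each

print(today, with_ai)  # prints [15, 9] [6, 5]
```

At today’s ratio the portfolio needs between 9 and 15 people, which is where the team of twelve sits. At 8–10 assets each it needs 5 or 6. That gap is what an outsourced provider’s fee is currently built on.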

What are you actually selling? Asset management fees bundle information assembly, analysis, market knowledge, relationships, judgement, and accountability. AI commoditises the first two. The premium concentrates on relationships, judgement, and accountability, which account for perhaps 40% of where time currently goes. If clients see that 60% of the bundle is automated, the fee holds only if the remaining 40% is demonstrably better.

If someone built an asset management platform from scratch today, would they build what you’ve got? They’d build continuous surveillance and evidence assembly as infrastructure, hire a small team of very senior people whose job is relationships, judgement, and accountability, and pitch: “Better asset management at lower cost, because our platform handles what your AMs do manually, and our people focus on what requires human judgement.”

After this shift, whose signature still matters? The asset manager’s threat assessment currently carries authority because they assembled it. In the new model, their signature means: “I validated the AI’s assessment, applied my contextual knowledge, and I endorse this view.” Still valuable. But a different job. The skills shift from production to evaluation - and evaluation is a more senior skill. The junior analyst who helped assemble the picture may not have a role. The senior AM who can challenge an AI-generated assessment is more valuable than ever.

Who has this problem right now, and what are they paying to solve it badly? Every investor surprised by a write-down. Every portfolio committee that asked “did we know about this?” after a tenant default. Every fund manager who lost a mandate because a competitor seemed more on top of their portfolio. They’re all paying for the absence of continuous, evidence-linked threat intelligence. They’re just paying in lost value and lost mandates rather than invoices.

Apply the test: does it make the current process obsolete? If continuous threat intelligence exists, the quarterly “present your buildings” review becomes unnecessary. The one-person-owns-five-buildings-end-to-end structure becomes legacy. That’s not a faster taxi. It’s a different way of getting around.

REDESIGN: HUMAN + MACHINE, BY DESIGN

RIRA’s Redesign phase turns the strategic picture into operational architecture. The key insight is blunt: the current model asks one person to do everything. They spend 60% of their time on data assembly and routine monitoring, 40% on interpretation, judgement, and relationships. The plumbing subsidises the cognition. That’s backwards.

The redesign separates four layers:

A continuous surveillance layer: lease events, payment patterns, market data, comparables, regulatory deadlines. Jurisdiction-specific rules. Data confidence indicators showing what’s verified and what isn’t. Always on.

An evidence assembly layer triggered by events: structured, cited assessments where every claim traces to source.

A portfolio pattern layer - genuinely new - surfacing correlations that no individual can see.

A human judgement layer with two explicitly protected activities. First: evaluate, challenge, and override the automated assessments using contextual knowledge the system doesn’t have. Second: maintain and deepen the relationship intelligence that detects the threats machines cannot see. Building visits, tenant conversations, PM relationships, market presence. This is not leftover work after automation. It is formally specified, time-protected, and treated as core capability - because the Release analysis told us that automation of known-threat surveillance will degrade unknown-threat detection if the relationship layer isn’t deliberately preserved.
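The hand-off between the evidence assembly layer and the human judgement layer can be sketched as a data structure. This is a minimal Python illustration, not a design: the class names, fields, and the rule that sign-off is blocked while any claim lacks a source are my assumptions about one way to enforce “every claim traces to source”.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Claim:
    statement: str
    source: Optional[str] = None   # where the claim comes from; None = untraced
    verified: bool = False         # data confidence indicator


@dataclass
class ThreatAssessment:
    asset: str
    claims: List[Claim] = field(default_factory=list)
    endorsed_by: Optional[str] = None

    def untraced(self) -> List[Claim]:
        return [c for c in self.claims if not c.source]

    def unverified(self) -> List[Claim]:
        return [c for c in self.claims if c.source and not c.verified]

    def endorse(self, reviewer: str) -> None:
        # Sign-off is refused while any claim has no source behind it.
        if self.untraced():
            raise ValueError("every claim must trace to a source before sign-off")
        self.endorsed_by = reviewer


assessment = ThreatAssessment(
    asset="Asset-01",  # hypothetical asset and claims, for illustration only
    claims=[
        Claim("Break option falls within 24 months", source="lease abstract", verified=True),
        Claim("Tenant is reviewing its space needs"),  # heard on site, not yet logged
    ],
)

try:
    assessment.endorse("senior AM")
    blocked = False
except ValueError:
    blocked = True

# The human adds the source only they hold, then signs.
assessment.claims[1].source = "site visit note"
assessment.endorse("senior AM")
```

The first sign-off attempt fails because the second claim has no source. Once the asset manager records where it came from, the assessment can be endorsed, and `unverified()` still lists that claim so the committee can see it rests on relationship intelligence rather than verified data.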

This aligns with what recent research on human–AI collaboration keeps finding: the gains come not from replacing humans wholesale, but from designing workflows where pattern meets exception, scale meets judgement, analysis meets intuition, and creativity meets structure. Real synergy - where 2+2 genuinely makes 5 - is a workflow design outcome, not a purchasing decision.

The result: the senior asset manager spends 80% of their time on work only they can do, instead of 40%. Their judgement improves because they’re not cognitively depleted by data assembly. The portfolio committee receives evidence-linked, auditable assessments. Cross-portfolio patterns are visible for the first time. And the human intelligence layer is stronger, not weaker, because it’s valued rather than incidental.

That is what human + machine looks like when it’s designed rather than accidental.

WHAT TO DO NEXT

The same process applies whether your workflow is leasing, fund reporting, valuation support, debt management, development monitoring, or facilities operations. The specifics change. The method doesn’t.

Take your most important workflow and ask:

What are the discrete tasks? Decompose until each is a single kind of work.

What constraints does AI remove? Bandwidth, evidence assembly, pattern recognition, analytical depth…

What new constraints does it introduce? False negatives, complacency, verification gaps, data governance… Design requirements, not afterthoughts.

How does classification change by jurisdiction and data quality? If it doesn’t change, you haven’t looked closely enough.

Where should the machine run continuously?

What human capability must be protected, not displaced? Time-allocated. Measured. Accountable.

Where does the combination produce something neither could achieve alone?

That’s your roadmap. Everything else is decoration.