
CRE AI Is a Layer Cake

Why most CRE AI programmes are building the wrong layer first - and what the right architecture actually looks like in 2026.

There is a view that keeps surfacing in CRE: in boardrooms, at conferences, and in the LinkedIn comments under my posts. It says the useful AI work in property is the analytical kind. Forecasting rents. Predicting prices. Scoring risk. Optimising portfolios. The kind of work that takes a data science team, an eighteen-month build and a great deal of patience. That view is not wrong exactly. It is just, for most firms and most people, extraordinarily beside the point. And its practical effect is to make the industry wait for a perfect model, a clean dataset, a specialist team, while the tools that could be improving your work today sit unused.

Executive summary

Modern AI in CRE is a layered architecture, not a single system. Five layers, numbered 0 to 4: data foundation, reasoning substrate, grounded retrieval, workflow automation, and bespoke analytical AI. Value compounds from the bottom up, and the largest gains for most firms sit in the middle, in the layers most often dismissed as ‘just chatbots’. The mistake the industry keeps making is to start at the top, ignore the middle, and then wonder why two years of programme spend has produced nothing useful. This piece walks through each layer: what it is, what good looks like, and how to sequence the stack so that value shows up in weeks rather than years.

THE OVERVALUED LAYER

Go to almost any CRE AI discussion and the conversation drifts, within minutes, towards forecasting, scoring and prediction. This is Layer 4 of the modern stack. It is real. It is occasionally necessary. It is also the smallest, most selective, and least commonly needed layer for the vast majority of firms.

Most people in commercial real estate do not spend their days building predictive models. They spend their days doing something else entirely.

WHAT YOU ACTUALLY DO ALL DAY

Look at your own diary this week. Your time has been going into:

reading documents

extracting facts

comparing clauses

drafting memos

reviewing evidence

checking compliance

finding precedents

assembling reports

moving information from one place to another

That is your working life. It is not Layer 4 work. It is Layer 1 and Layer 2 work. It is exactly where modern AI systems are already excellent. And it is exactly where most of the value is currently being left on the table.

‘JUST CHATBOTS’ IS THE EXPENSIVE MISTAKE

A vocal faction in CRE still dismisses LLMs as ‘just chatbots’. The phrase gets repeated at conferences, on analyst calls, and in the mouths of executives who have never seriously tried to use one. That dismissal is the single most expensive AI mistake currently being made in our industry.

It mistakes the interface for the system. A conversational prompt is how you talk to Layer 1. It is not what Layer 1 is. What Layer 1 is, when deployed properly, is a reasoning engine with access to the firm’s templates, the firm’s house style, and the firm’s codified approach to work like covenant analysis, lease abstraction and tenant financial assessment. Set inside a well-configured Project, with the relevant documents in context, it drafts. It compares. It extracts. It reviews. It challenges. It does in ninety minutes what a competent analyst would take three days to do on the current deal, and the analyst can see which documents in the Project it drew on. And that is Layer 1 alone, before you connect it to the firm’s wider archive or build an agent on top of it.

Dismissing that as ‘chatbots’ is like dismissing a modern steel mill as ‘just a furnace’. The interface is not the point.

SO WHAT IS THE POINT?

The point is that CRE AI is a layered architecture, and most of the value sits below the predictive layer that gets all the attention. Five layers. Each one compounds on the one below it. Skip the foundations and the upper layers wobble. Build the foundations properly and the upper layers become cheap to add.

And there is a second architectural shift that matters even more. A few years ago, analytical AI was the primary system, with natural language interfaces added on top. In modern architecture, that has been inverted. The reasoning layer is primary. The analytical AI is a tool the reasoning layer calls when it needs specialist computation. Claude does not forecast rental growth. It calls a forecasting model that does, interprets the output, contextualises it against other evidence, and drafts the narrative for the analyst. This is not a cosmetic difference. It changes what the system can do, what it costs to build, and where the value lives.

What follows is the architecture, layer by layer. What each is. What good looks like. Where it is genuinely needed. Where firms systematically over-invest. And how to sequence the whole thing so that you get working capability in weeks rather than years.

LAYER 0: DATA FOUNDATION (THE PREREQUISITE)

Start here, or everything above it fails.

What it is. The data hygiene, indexing and access infrastructure that makes everything else in this piece possible. It is not AI. It is the precondition for AI.

What good looks like. People across the firm can locate documents quickly. Reference systems are consistent. Deal rooms are structured. Historical records are digitised and searchable. There is a clear ownership model for data quality. Lease data is not scattered across seventeen PM systems and a shared drive called FINAL_FINAL_v2. Deal documents have consistent metadata. ESG data flows into a system rather than being re-keyed from PDFs each quarter.

What it enables. Everything. Without Layer 0, the rest of the stack either doesn’t work or produces unreliable output with no audit trail.

The reality check. For many institutional CRE firms, the honest starting position is that Layer 0 is the biggest single constraint on AI ambition. Closing the gap is tedious, unglamorous and expensive. It is also unavoidable. The firms that pretend they can skip it produce demos that impress the board and fall apart under real use. The firms that take it seriously spend the first six to twelve months of their AI programme doing the work nobody wants to do, and then their subsequent layers compound properly. This is the single largest determinant of whether an AI programme delivers real value or productivity theatre.

One important qualifier. Layer 0 is a prerequisite for firm-wide capability: the kind of institutional memory and portfolio-level grounding that Layer 2 depends on. It is not a prerequisite for individual practitioners getting on with specific pieces of work. An analyst with the documents for a specific deal in a specific Project can produce real Layer 1 value tomorrow morning, regardless of the state of the firm’s wider data estate. The waiting game that Layer 0 concerns sometimes invite is a mistake. Your firm’s data might be a mess. The documents you need for today’s work are in front of you. Start there.

And a second nuance. Layer 0 work is itself increasingly AI-assisted. LLMs are now genuinely good at extracting structured data from unstructured documents, normalising inconsistent records, and reconciling references across systems. The Layer 0 cleanup and the Layer 1 deployment can proceed in parallel, with the Layer 1 tooling accelerating the Layer 0 work. But the principle holds: you cannot build reliable firm-wide capability on top of unreliable data.

LAYER 1: THE REASONING SUBSTRATE

This is the layer most firms underestimate. It is also the layer where most of the value is.

What it is. A frontier LLM environment (Claude, ChatGPT, Gemini) deployed as the default thinking environment for your analysts, portfolio managers, and operations staff. Not a chatbot bolted onto existing workflows. The reasoning layer that sits underneath everything your people actually do.

A note on tooling. The language of Projects, Skills and configured coworkers is currently most developed in Claude, which leads the market on this architecture as of early 2026. Equivalent or near-equivalent capability is arriving rapidly across the frontier - OpenAI, Google and others - and by the end of this year the architectural pattern will be general rather than vendor-specific. The principles below apply across all frontier providers; the current naming reflects where the tooling is furthest along.

The building blocks at this layer.

Projects hold the context for a specific piece of work. A Project for an acquisition might contain the offering memorandum, the data room index, the comparable transactions, the underwriting template, and the draft IC memo. Everything the analyst is working on lives inside the Project, and every conversation with the reasoning layer is grounded in that context. Projects are the unit of work: not the individual prompt, and not the whole firm’s knowledge base, but the specific thing being done right now.

Skills are codified workflows that encode how your firm does a specific task. A lease abstraction skill knows what fields to extract, what schema to produce, what red flags to surface, and how your templates are structured. A covenant analysis skill knows your firm’s approach to tenant financial assessment. An IC memo skill knows your house style and the required structure of each section. Skills turn institutional know-how into repeatable cognitive workflows that any analyst can invoke. They are the single most underused capability in modern AI systems, and they are where most of the firm-specific value actually lives.

Configured coworkers are persistent personas set up for a specific role: a covenant analyst, a compliance reviewer, a climate risk scout, an investor relations drafter. A covenant analyst coworker is not a workflow you run: it is a colleague you ask. It carries its own instructions, reference materials and behaviours, and it can be called on by anyone in the firm who needs that kind of thinking applied to their current problem. The point of configured coworkers is to turn expertise that currently lives in one person’s head into something the whole firm can access.
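To make the idea of a skill concrete, here is a minimal sketch of what the output schema and red-flag rules behind a lease abstraction skill might look like. Everything in it is an assumption for illustration: the field names, the `LeaseAbstract` class, the `surface_red_flags` helper and the thresholds are hypothetical, and a real skill would encode your firm's own template and house rules.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class LeaseAbstract:
    """Hypothetical output schema for a lease abstraction skill."""
    tenant: str
    lease_start: date
    lease_end: date
    annual_rent: float
    break_date: Optional[date] = None
    rent_review_basis: Optional[str] = None  # e.g. "open market", "index-linked"
    red_flags: list[str] = field(default_factory=list)


def surface_red_flags(lease: LeaseAbstract, today: date) -> LeaseAbstract:
    """Apply illustrative house rules; the thresholds here are assumptions."""
    flags = []
    if lease.break_date is not None and (lease.break_date - today).days <= 365:
        flags.append("tenant break option within 12 months")
    if lease.rent_review_basis is None:
        flags.append("rent review basis not found in document")
    if (lease.lease_end - today).days <= 2 * 365:
        flags.append("lease expiry within 24 months")
    lease.red_flags = flags
    return lease


# Usage with made-up values.
lease = LeaseAbstract(
    tenant="Example Tenant Ltd",
    lease_start=date(2020, 1, 1),
    lease_end=date(2030, 1, 1),
    annual_rent=250_000.0,
    break_date=date(2026, 9, 1),
)
checked = surface_red_flags(lease, today=date(2026, 4, 1))
print(checked.red_flags)
```

The point of the sketch is that the schema and the rules are written down once, so every analyst who invokes the skill gets the same fields and the same flags.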

What Layer 1 actually delivers. Most of the value most firms need, most of the time. This is the part people systematically under-estimate, because it sounds too simple.

An analyst working inside a well-configured Project, with access to the right skills and coworkers, can draft an IC memo in an afternoon that would previously have taken a week. A covenant review that required three days of manual reading can be compressed to ninety minutes of structured interaction. Compliance flagging becomes a background process rather than a quarterly fire drill. None of this requires custom agents. None of it requires analytical AI. None of it requires a knowledge graph. It requires Layer 0 data and a well-deployed Layer 1 substrate.

Why this matters. Layer 1 is where the compounding value lives. Every new skill, every new coworker, and every new Project template captures institutional knowledge in a form the whole firm can use tomorrow. It is also where firms that ‘get it’ start to pull away from firms that don’t. The gap between the two is already visible inside the firms running my courses. It will become obvious in the market within eighteen months.

LAYER 2: GROUNDED RETRIEVAL OVER FIRM DATA

Now the reasoning layer stops answering from its training data and starts answering from yours.

What it is. A structured retrieval layer that lets the Layer 1 reasoning substrate access the firm’s actual data with proper grounding and evidence chains. This is what RAG (retrieval-augmented generation) does when it is built properly. Your deal rooms, historical documents, lease archives and reporting systems become queryable through the reasoning substrate, with every answer traceable back to source.

Why this is separate from Layer 1. Layer 1 can work with the context you put into a Project, but a single Project cannot contain the whole firm’s history. Layer 2 extends the reasoning layer’s reach to the firm’s institutional memory, while maintaining the evidence-linking that makes the output defensible. When an analyst asks ‘have we ever underwritten a deal with a similar covenant structure?’, the answer comes from Layer 2 retrieval, with citations back to the actual historical documents.

What good looks like. Every answer produced at this layer has an evidence chain. The analyst can see which documents were retrieved, which passages were cited, and how the reasoning layer used them. Nothing is hallucinated, because everything is grounded in retrievable source material. The underlying retrieval technology (vector databases, structured extraction, semantic search) has matured to the point where building this layer is engineering work rather than research.
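A toy sketch of what an evidence chain means in practice. It assumes a tiny in-memory corpus and uses plain shared-word counting as a stand-in for real semantic or vector search, so treat it as an illustration of the shape of the output rather than of retrieval quality. The `Passage` class, the function names and the document identifiers are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Passage:
    doc_id: str  # which document the text came from
    page: int
    text: str


def tokenize(text: str) -> set[str]:
    """Lower-case words with surrounding punctuation stripped."""
    return {w.strip(".,;:?!()'\"").lower() for w in text.split()} - {""}


def retrieve(query: str, corpus: list[Passage], top_k: int = 2) -> list[tuple[Passage, int]]:
    """Rank passages by shared-word count with the query (stand-in for semantic search)."""
    q = tokenize(query)
    scored = [(p, len(q & tokenize(p.text))) for p in corpus]
    hits = [(p, s) for p, s in scored if s > 0]
    hits.sort(key=lambda ps: ps[1], reverse=True)
    return hits[:top_k]


def evidence_chain(query: str, corpus: list[Passage]) -> list[str]:
    """Citations the analyst can check; an empty list means no grounded answer."""
    return [f"{p.doc_id} p.{p.page}: {p.text}" for p, _ in retrieve(query, corpus)]


# Made-up corpus for illustration only.
corpus = [
    Passage("deal-2019-017/lease.pdf", 12, "The tenant covenant is supported by a parent company guarantee."),
    Passage("deal-2021-004/ic-memo.pdf", 3, "Rent reviews are index-linked with a cap and collar."),
    Passage("deal-2022-031/lease.pdf", 8, "The guarantee from the parent falls away on assignment."),
]

for citation in evidence_chain("parent company guarantee covenant", corpus):
    print(citation)
```

The design choice worth noticing is the empty list: when nothing relevant is retrieved, the system returns no citations rather than an ungrounded answer.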

A note on knowledge graphs. If your firm already has a well-structured knowledge graph, that is a significant advantage at this layer. A knowledge graph captures explicit relationships between entities rather than just semantic proximity, and it can answer queries that vector search struggles with. ‘Show me all assets where the tenant covenant has weakened since acquisition and the CRREM pathway is red’ is a natural query for a knowledge graph and a hard one for pure vector search.
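The example query is easy once the relationships are explicit. The sketch below uses plain Python structures rather than a graph database, and every name and value in it is made up: the `Asset` class, the 1 to 5 covenant scale and the status labels are assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Asset:
    name: str
    tenant: str
    crrem_status: str  # hypothetical labels: "green", "amber", "red"


# Explicit facts about each tenant: covenant score at acquisition and now.
# The 1-5 scale (higher is stronger) is an assumption for illustration.
covenant_scores = {
    "Tenant A": {"at_acquisition": 4, "current": 2},
    "Tenant B": {"at_acquisition": 3, "current": 3},
    "Tenant C": {"at_acquisition": 5, "current": 3},
}

assets = [
    Asset("Asset 1", "Tenant A", "red"),
    Asset("Asset 2", "Tenant B", "red"),
    Asset("Asset 3", "Tenant C", "amber"),
]


def weakened_and_red(assets, covenant_scores):
    """Assets whose tenant covenant has weakened since acquisition AND whose CRREM pathway is red."""
    result = []
    for asset in assets:
        score = covenant_scores[asset.tenant]
        weakened = score["current"] < score["at_acquisition"]
        if weakened and asset.crrem_status == "red":
            result.append(asset.name)
    return result


print(weakened_and_red(assets, covenant_scores))  # ['Asset 1']
```

Both conditions are exact comparisons on linked facts. That is why the query suits a graph or other structured store, and why similarity search over text struggles with it.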

So: are knowledge graphs a good thing if you have them? Emphatically yes. A knowledge graph is a Layer 2 asset that should absolutely be connected into the retrieval fabric and used as the highest-quality grounding source available. The argument is not that knowledge graphs are unnecessary. It is that a firm starting from scratch today should not begin by building one. The modern sequencing is: deploy Layers 0 and 1, build grounded retrieval at Layer 2 using whatever structured data is available, and add a knowledge graph when the limits of simpler retrieval become binding. Not that long ago, a knowledge graph was the only way to get grounded cognition, because there was no reasoning engine to pair it with. In 2026, the reasoning engine exists, and the knowledge graph is one of several options for grounding it. Powerful, but no longer foundational in the way it had to be a decade ago.

LAYER 3: CUSTOM AGENTS AND WORKFLOW AUTOMATION

Now the reasoning substrate stops being something people talk to, and starts being something that runs by itself.

What it is. Task-specific automated workflows built on top of Layers 1 and 2. Where Layer 1 supports an analyst doing their work interactively, Layer 3 runs the workflow end-to-end with defined inputs, defined outputs and defined quality checks. A Layer 3 agent for quarterly rent roll reconciliation takes the source files from the PM system, checks them against the GL, flags exceptions, produces the reconciliation report, and surfaces anything that needs human attention, all without an analyst needing to drive the process.
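The deterministic core of that rent roll example can be sketched in a few lines. This is an illustration under stated assumptions, not a production agent: it assumes both the PM export and the GL have already been reduced to one amount per lease ID, and the `Discrepancy` class, the `reconcile` function and the tolerance are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Discrepancy:
    lease_id: str
    reason: str


def reconcile(
    rent_roll: dict[str, float],
    ledger: dict[str, float],
    tolerance: float = 0.01,
) -> list[Discrepancy]:
    """Compare billed rent per lease in the PM export against GL postings."""
    issues = []
    for lease_id in sorted(rent_roll.keys() | ledger.keys()):
        pm = rent_roll.get(lease_id)
        gl = ledger.get(lease_id)
        if pm is None:
            issues.append(Discrepancy(lease_id, "in GL but missing from rent roll"))
        elif gl is None:
            issues.append(Discrepancy(lease_id, "in rent roll but missing from GL"))
        elif abs(pm - gl) > tolerance:
            issues.append(
                Discrepancy(lease_id, f"amount mismatch: rent roll {pm:.2f} vs GL {gl:.2f}")
            )
    return issues


# Made-up figures for illustration only.
rent_roll = {"L-001": 25000.0, "L-002": 18000.0, "L-003": 9500.0}
ledger = {"L-001": 25000.0, "L-002": 17500.0, "L-004": 4000.0}

for issue in reconcile(rent_roll, ledger):
    print(issue.lease_id, "-", issue.reason)
```

In a real Layer 3 agent the reasoning layer would sit around a check like this: parsing the source files into these structures, explaining each exception, and routing the ones that need human attention.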

The tooling. Claude Code is currently the leading example of a development environment for this layer. It is a terminal-based agentic environment in which the reasoning layer can execute code, call APIs, manipulate files, interact with external systems, and run multi-step workflows autonomously under human oversight. Equivalent tooling exists from other providers. The important thing is that Layer 3 work is genuinely development work. It requires thinking about task decomposition, error handling, exception routing, audit trails, and integration with source systems. This is not point-and-click, and it is where the engineering effort of an AI programme starts to become meaningful.

What Layer 3 is for. Repeatable, high-volume, rules-adjacent work where the firm currently spends meaningful human hours on tasks that follow a consistent pattern:

rent roll ingestion and reconciliation

covenant compliance checking

CRREM pathway monitoring

standardised report generation

lease abstraction at scale

due diligence document review against checklists

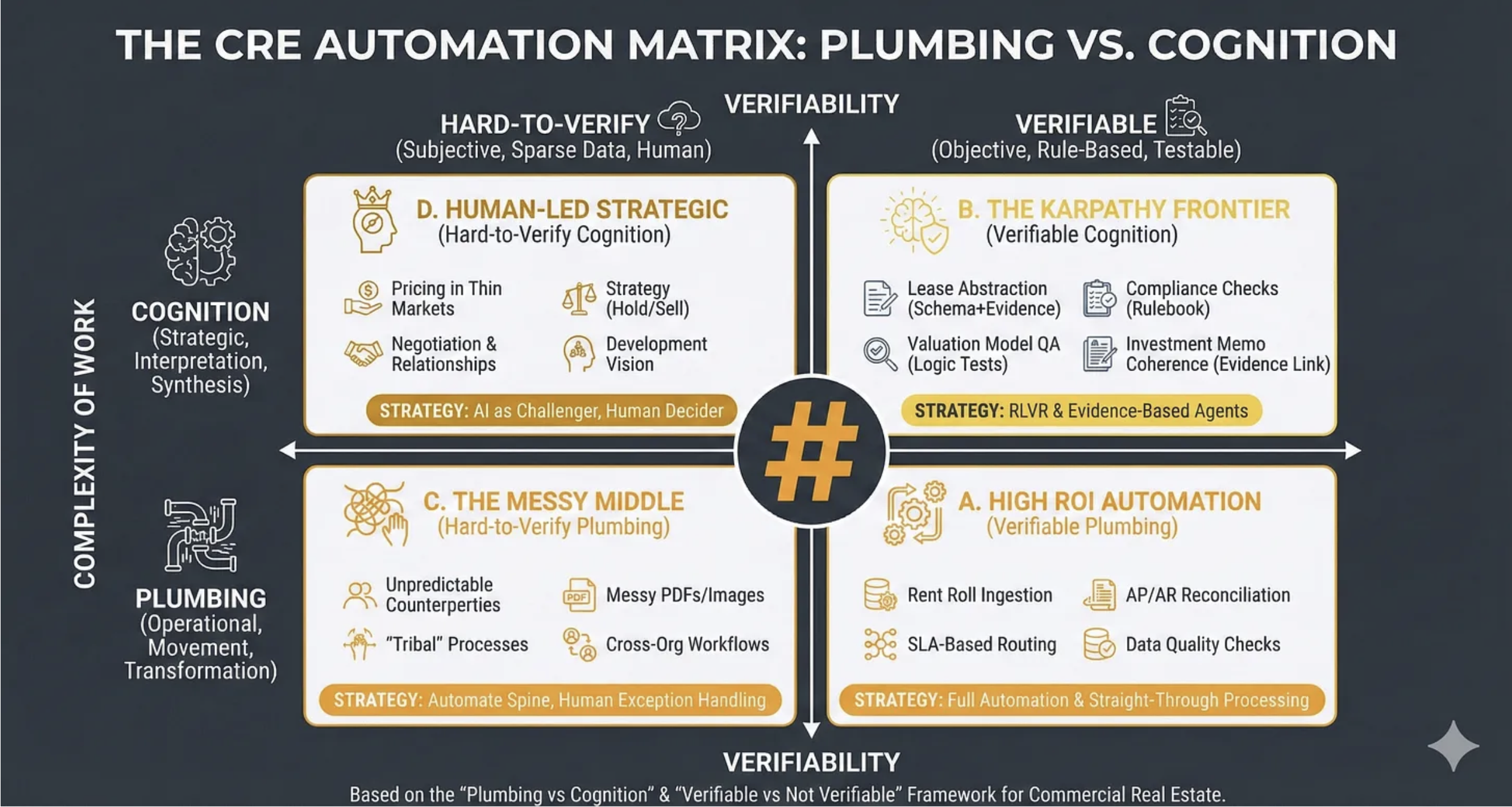

These are the Quadrant A and easier Quadrant B tasks from the RIRA CRE Automation Matrix (see other newsletters). They are where efficiency gains genuinely compound, because every run of a Layer 3 agent is work that no longer requires human time.

The sequencing trap. Most firms try to start here. They identify a painful workflow, commission an AI project to automate it, and build a custom agent before they have Layers 0, 1 or 2 in place. The results are predictable: the agent works in the demo, fails on edge cases, requires constant re-engineering, and ultimately delivers a small fraction of its promised value. Layer 3 only works when it sits on top of clean data, a capable reasoning substrate, and grounded retrieval. Build the foundations first.

LAYER 4: BESPOKE ANALYTICAL AI

And finally, the layer most people imagine is the whole thing.

What it is. Classical machine learning, statistical modelling, time-series forecasting, numerical optimisation, and other specialist techniques, for the problems where the frontier LLM approach is genuinely insufficient. This is the layer most people imagine when they hear ‘AI in real estate’. It is actually the smallest, most selective, and least commonly necessary layer of the stack.

Where Layer 4 is genuinely needed.

CRREM-aligned pathway modelling at portfolio level, where regulatory defensibility and reproducibility genuinely matter

time-series forecasting of operational metrics (energy, footfall, NOI under varied scenarios)

large-scale portfolio optimisation under complex constraints

satellite and drone computer vision for physical due diligence and ESG verification at scale, of the kind Kayrros and the space-intelligence cohort are doing for asset-level transition risk

AML and fraud pattern detection in transaction flows, where false-positive rates need to be tight and the audit trail needs to withstand regulatory scrutiny

graph-native queries across millions of entities with latency constraints that LLMs cannot meet

specialist risk models that require reproducibility and regulatory defensibility

Where Layer 4 is not needed, even though firms often assume it is.

drafting IC memos

summarising deal rooms

flagging unusual lease clauses

checking compliance against a policy document

producing quarterly reports

answering questions about the portfolio in natural language

generating first-draft analysis

All of those are Layer 1 and Layer 2 work. A surprising amount of what is currently branded ‘analytical AI’ in vendor pitches is actually Layer 1 capability dressed up in analytical language. Ask the vendors which layer their product sits in. The good ones will be able to tell you.

A word on the moat question

The most serious institutional objection to everything above is not ‘but we don’t have the data’ or ‘but this is just chatbots’. It is the moat argument: yes, but Layer 4 is where proprietary advantage lives. Anyone can deploy Claude in a Project. Defensible moats come from specialist models, proprietary data, and analytical depth that competitors cannot replicate. And beside the moat, there is the money argument: Layer 4 is where the prestige fee income sits, where the alpha is meant to live, and where the industry’s quantitative firepower has always been concentrated.

Both arguments deserve a direct answer. In commercial real estate, neither is as strong as it looks.

First, most CRE firms lack the data density that makes serious machine learning worthwhile. Property data is fragmented across PM systems, transaction records and market reports, with limited history, inconsistent taxonomies, and thin comparability across assets. Classical statistics and good old-fashioned data science take you most of the way. ML at scale requires signal depth that most firms simply do not have. The data gap is the reason we have seen so little genuinely predictive AI in real estate despite the tooling having been widely available for a decade. It is not that the industry has been slow to notice. It is that the underlying substrate does not support the ambition.

Second, the share of CRE work-quantum that is predictive-modelling-shaped is small. Rent forecasting, yield prediction and portfolio optimisation matter, but they are a minority of what the industry actually does with its time. The bulk of the work - the reading, extracting, comparing, drafting and reviewing - is Layer 1 and Layer 2 territory. A moat in a small corner of the work is a small moat.

Third, and this is the point that should give any Layer 4 enthusiast pause: there is no Jim Simons of real estate. Renaissance Technologies exists because public equity markets have deep historical data, high liquidity, and genuine arbitrageable inefficiencies. Real estate has none of these at scale. If Layer 4 were where the alpha lived in CRE, someone would have extracted it by now. A decade of widely available ML tooling has not produced a Rentec of property, because the asset class does not support one. This is a structural feature of real estate, not a temporary tooling gap. The assumption that it will change because the models get better is, so far, unsupported by evidence.

Add to this the structural shift of real estate from financial engineering towards operational performance, where the competitive edge is increasingly about running buildings well, serving occupiers well, and reading markets through lived operational contact, and the case that future CRE moats live at Layer 4 gets weaker still. The moats of the next decade are more likely to live in the quality of a firm’s Layer 1 and Layer 2 infrastructure: the depth of its skill library, the calibre of its configured coworkers, the cleanliness of its institutional memory, and the velocity at which it can turn that infrastructure into decisions. That is a different kind of moat. It is also one that more firms can actually build.

How Layer 4 connects to the rest of the stack

Bespoke analytical tools at Layer 4 are called by the reasoning layer, not alongside it. A forecasting model does not produce the IC memo. It produces a forecast. The reasoning layer interprets the output, contextualises it against other evidence, calls additional tools if needed, and drafts the narrative for the analyst. Layer 4 sits as a callable resource inside the Layer 1 substrate, not as a parallel system the analyst has to manually consult and then translate.
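The calling pattern can be sketched as follows. Nothing here is a real vendor API: `reasoning_layer` is a placeholder for the LLM, `naive_rent_forecast` is a deliberately simple compound-growth stand-in for a specialist model, and the tool registry and request format are assumptions for illustration.

```python
from typing import Callable


def naive_rent_forecast(current_rent: float, annual_growth: float, years: int) -> list[float]:
    """Stand-in for a specialist Layer 4 model: simple compound growth, nothing more."""
    return [round(current_rent * (1 + annual_growth) ** y, 2) for y in range(1, years + 1)]


# Registry of tools the reasoning layer is allowed to call (names are hypothetical).
TOOLS: dict[str, Callable[..., list[float]]] = {"rent_forecast": naive_rent_forecast}


def reasoning_layer(request: dict) -> str:
    """Placeholder for the LLM: pick a tool, call it, then wrap the numbers in narrative."""
    forecast = TOOLS[request["tool"]](**request["args"])
    first, last = forecast[0], forecast[-1]
    return (
        f"Forecast rent rises from {first:,.2f} in year 1 to {last:,.2f} in year "
        f"{len(forecast)}. Treat as one input alongside comparables and covenant evidence."
    )


print(
    reasoning_layer(
        {
            "tool": "rent_forecast",
            "args": {"current_rent": 100_000.0, "annual_growth": 0.02, "years": 3},
        }
    )
)
```

The forecasting function knows nothing about memos, and the reasoning layer knows nothing about how the forecast is computed. That separation is what lets a specialist model be swapped in later without changing how the analyst works.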

SEQUENCING: BUILD FROM THE BOTTOM

A firm starting today should assemble this stack in roughly the following order, with significant overlap between phases.

Months 0–6. Layer 0 work begins and Layer 1 is deployed in parallel. Data hygiene projects start. Projects are set up for current live deals. The firm’s first skills are written, typically covering the two or three most common document-heavy workflows. Initial coworkers are configured. Quick wins start appearing within weeks, not months. This is the phase where the early evidence that the programme is working gets generated, and it matters, because it builds the institutional trust that makes the later phases possible.

Months 3–12. Layer 2 grounded retrieval starts to come online as Layer 0 data becomes usable. Existing knowledge graph assets, if any, are connected. The reasoning substrate begins to reach into the firm’s institutional memory, and the early skills and coworkers become meaningfully more capable because they have access to firm history rather than just the documents in the current Project. This is where the verifiability story becomes real: every output now has evidence chains back to source material.

Months 9–18. Layer 3 custom agents begin to be commissioned for specific high-value repeatable workflows. These agents are built on top of the Layer 1 substrate and the Layer 2 retrieval fabric, so they inherit the reasoning capability and the grounding infrastructure rather than having to rebuild them. Development effort at this phase is genuine, but it is targeted at specific workflows with clear ROI, not at foundational infrastructure.

Months 18+. Layer 4 bespoke analytical work is commissioned only where specific, identified capability gaps cannot be addressed by the lower layers. Most firms will find that the surface area of genuine Layer 4 need is much smaller than they expected, because Layers 1 through 3 cover most of what they thought they needed specialist analytical AI for.

The critical difference from the AI strategies of five years ago is that this sequence generates value at every stage. A modern deployment generates working capability in the first weeks and compounds from there. The underlying principle (that verifiability, grounding and evidence chains matter) is identical. The path to achieving it is completely different.

WHAT THIS DOES NOT CLAIM

Three honest caveats, before anyone reads more into this than I am actually saying.

It does not claim Layer 1 is sufficient for all use cases. Some firms genuinely need Layer 4 analytical work, and pretending they don’t is as wrong as pretending they need it for everything. The point is that Layer 4 should be a selective, targeted commitment based on specific identified capability gaps, not a default starting point.

It does not claim this sequencing works without Layer 0 data work. The data prerequisite is the most important line in this whole piece. A firm that tries to deploy firm-wide capability on top of chaotic unindexed document storage will produce unreliable output with no audit trail, and the programme will stall within months. Individual practitioners can still make progress on specific deals with specific documents, but the firm-wide compounding does not happen without Layer 0.

It does not claim the human layer is unimportant. None of the architectural sequencing above replaces the need for investment professionals who know how to interpret AI output, challenge it when it is wrong, and make the judgement calls that remain irreducibly human. The entire point of verifiable cognition is that it supports human decision-making rather than replacing it. The governance, cultural and skills work that surrounds the technical stack is at least as important as the stack itself, and arguably more important. Firms that deploy the technology without investing in the human layer end up with sophisticated tools their people don’t know how to use.

AND FINALLY

This is a working artefact. It reflects the current state of frontier AI tooling as of April 2026 and the architectural patterns now emerging as best practice for institutional CRE firms. It will age, some parts faster than others, and should be revisited as the tooling improves.

But the core argument is not going to age. The value in modern CRE AI sits below the predictive layer. It is accessible now. It is layered, and it compounds from the bottom up. The firms that understand that in 2026 will be the firms that still matter in 2030.

You do not need to wait for a model to be trained. You do not need a CTO to green-light a platform. You do not need a data science team. You need to look at your diary this week, identify the five things you do that look like reading, extracting, comparing, drafting or reviewing, and start doing them with Layer 1 tooling tomorrow morning. Open a Project. Drop the documents in. Ask it to do the first task. Twenty minutes, not twenty months.

That is the shortest path from current capability to better capability in commercial real estate. It is not the glamorous path. It is the one that works.

The AI Labs Are Telling Three Different Stories


OpenAI’s new policy document is telling investors, regulators and workers three different stories about the future of AI. For commercial real estate, the contradictions between them matter more than any individual proposal.

OpenAI published ‘Industrial Policy for the Intelligence Age’ on 6th April. Most of the coverage has focused on the headline proposals: a Public Wealth Fund, four-day-week pilots, adaptive safety nets. All interesting. All missing the underlying point. The frontier AI labs are now telling three different audiences three different stories, and the stories cannot all be true. For those of us in commercial real estate, that contradiction matters more than any individual proposal in the document.

THREE AUDIENCES, THREE STORIES

To investors, the labs are telling a story of massive, durable value capture. The justification for near-trillion-dollar valuations, the hundreds of billions in compute commitments, the talent arms race, none of it works unless the labs themselves capture a huge share of the productivity gains they generate. Investors are being told, in the language of pitch decks rather than policy papers, that frontier AI is a winner-take-most market and that the winners will extract rents at a scale that makes today’s hyperscalers look modest.

To policymakers and the public, the labs are telling a story of broadly shared abundance. Lower costs for essential goods. Scientific breakthroughs reaching ordinary communities. Productivity dividends flowing to workers. A Public Wealth Fund so everyone participates in the upside. This is the OpenAI document. This is the story that makes the regulatory bargain palatable.

To workers and labour markets, the labs are telling a story of disruption-but-also-opportunity. Some jobs go, others emerge, the care economy absorbs the displaced, retraining bridges the gap. It will be hard, but it will be fine. The historical analogies - electricity, the combustion engine, mass production - get wheeled out to reassure.

These three stories cannot simultaneously be true. The arithmetic doesn’t work.

THE ARITHMETIC PROBLEM

If the labs capture the value, there isn’t enough left over for abundance or for new worker opportunities at the scale being promised. The total productivity pie has to be divided. The labs’ investor pitch requires them to take the biggest slice. Everything else is residual.

There’s a deeper problem with the historical analogies. Electricity, the combustion engine and mass production were all complementary to human labour over the long run. They made workers more valuable, not less. Electrification didn’t eliminate factory workers; it changed what they did and made them indispensable. The productivity gains were captured by labour because labour was still needed to operate the new capital. AI’s distinctive feature, the thing that excites investors, is precisely that it substitutes for cognitive labour rather than complementing it. That is the whole pitch. The gains accrue to whoever owns the substitute, not to the workers being substituted for. The labs know this. It’s why they’re proposing capital tax rebalancing and Public Wealth Funds in the first place.

The honest position the labs could take is this: AI will produce enormous abundance, modest aggregate growth, severe distributional consequences, and our business model depends on capturing a disproportionate share of the gains, which is why we’re proposing redistributive policies as the price of social licence. That would be coherent. It would also be unsayable to investors. So instead you get a document that gestures at all three stories simultaneously and hopes nobody does the arithmetic.

If you’re in commercial real estate, you need to do the arithmetic. Each of these stories implies a completely different future for your assets. So does the story none of them is telling.

THE STAIRCASE

Frontier AI capability is moving on a staircase. The leading edge is genuinely expensive and genuinely hard. A handful of labs (Google, OpenAI, Anthropic) sit at the top, burning capital at extraordinary rates to push the frontier forward. They will probably keep doing this, and the frontier itself will probably stay profitable. But only for the narrow slice of use cases that genuinely need bleeding-edge capability. Frontier research. The hardest reasoning problems. Genuinely novel domains. A specialty business, not a civilisation-funding cash cow.

Meanwhile, the cost of year-old capability is dropping 10x per year. GPT-5-class models, then frontier-class models, then whatever comes next: each generation falls down the staircase, gets cheaper, gets smaller, runs locally on commodity hardware (the end-point Apple is banking on), and embeds itself into every productivity tool and business process across the economy. Open-source models like Kimi, DeepSeek and Qwen are trailing the frontier by 6-9 months, which means state-of-the-art becomes nearly free rapidly. At some point in the not-distant future, a one- or two-year-old free model will be more than powerful enough for most things most people will ever need.

This is the semiconductor pattern. Intel made fortunes at the leading edge while last year’s processor became a commodity. The leading edge stayed profitable because it stayed scarce. The commodity layer generated enormous economic value, but very little of it accrued to the chip-makers themselves. Most of it accrued to the firms using the chips: Apple, the cloud providers, the entire downstream economy. AI looks to be heading the same way, and heading there faster than the labs’ valuations assume.

A point worth being precise about. The frontier labs are real businesses with real products, real users, real revenue, and real strategic importance. On a net-net basis they are almost certainly a societal good. None of that is in question. The question is whether the valuations price in a winner-take-most outcome that the staircase pattern makes unlikely. Massive demand for AI is not the same thing as massive profit for the labs. You can have a transformative technology, deployed at vast scale, generating enormous economic value, in which the producers of the technology earn perfectly reasonable returns rather than monopoly rents. That is the staircase scenario, and it is the one the current valuations cannot survive.

WHO ACTUALLY LOSES

The mechanism for the loss is already being prepared. OpenAI is reportedly heading for an IPO at a valuation north of a trillion dollars. Anthropic is on a similar trajectory. The current shareholder base is a mixture that will behave very differently when the exit arrives. Founders, employees and early venture capital are natural sellers at peak valuations; that is how the venture model works, and they will take their distributions. Strategic investors like Microsoft and Google are a different case: they are long-term holders with enormous, diversified businesses that will capture downstream value from the staircase whether or not the labs themselves deliver on their direct valuations, which means they are effectively hedged and relatively relaxed about the outcome.

The unhedged position at IPO is the retail one. Index funds buy in because they track the market. Pension savers and ISA holders and 401(k) participants own those index funds. By the time it becomes clear that frontier rents have migrated downstream into the broader corporate economy, the venture capital will already have exited and the strategic investors will be shrugging it off because their cloud divisions are booming. The losers will be the people who own a FTSE or Dow Jones All-World tracker and never knew they were taking a frontier-AI bet at all.

What does this mean for the three stories? The labour disruption is just as real in this scenario as in any other. Substitution still happens. Cognitive workflows still get restructured. The displacement still arrives. But the actual money shows up somewhere completely different from where the labs are pointing. Not concentrated in a few San Francisco companies. Not vanishing into untaxable consumer surplus either. Spread across thousands of mid-cap firms across every sector that successfully restructures around AI-augmented operating models. Their margins go up. Their corporate tax bills go up. The fiscal base grows through entirely normal channels.

In this scenario, you don’t need a ‘robot tax’ of the kind Bill Gates proposed in 2017. You don’t need exotic new instruments to capture rents that aren’t there. You need normal corporate income taxation working at slightly higher effective rates against a broader and faster-growing base. The redistributive arithmetic works without anyone having to invent a new fiscal architecture.

Which makes the OpenAI document’s policy framework worth reading a second time. The document does not argue for the staircase world. It argues for the investor-pitch world, and it proposes policies that would fund the fiscal response to AI disruption by broadening capital taxation across the board rather than by taxing the labs specifically. Read it again with that in mind. The proposals are carefully pointed at the broad capital base: higher capital gains, higher corporate income tax, vaguely-defined ‘measures on sustained AI-driven returns’. What is conspicuously absent is anything targeted at the labs themselves. No compute tax. No inference levy. No windfall tax on lab IPOs. No specific rates, no specific instruments, no specific thresholds. The vagueness is the tell. The document is signalling to investors that profits will be enormous, while simultaneously signalling to regulators that the fiscal response should fall on capital in general rather than on frontier AI in particular. Those two signals look contradictory but they’re not. They converge on exactly the same policy outcome: broad-base capital taxation, which at a group level costs the labs far less than a targeted rent tax would, whether the rents arrive or not.

REAL ESTATE PAYS TWICE

For commercial real estate, the labour disruption is real and you should plan for it regardless of how the fiscal argument resolves. Office demand keeps grinding lower. Bifurcation continues. Prime wins, secondary loses, the middle hollows out. The standard CRE story about AI is roughly right at the operational level, and you should not let the more comforting noises out of the industry trade press lull you into thinking otherwise.

The more interesting question is fiscal. Read the OpenAI document again with real estate specifically in mind. Suppose the investor pitch turns out to be right. Suppose frontier AI really does generate enormous, durable rents at a handful of labs, and governments face the labour-tax erosion the document warns about. What does OpenAI propose as the fiscal response? Broaden capital taxation. Higher capital gains at the top. Higher corporate income tax. Vaguely-defined measures on ‘sustained AI-driven returns’.

Notice what that framework does when applied in practice. Governments looking to broaden capital taxation go first to the largest, most immobile, most politically vulnerable capital base they can find. Commercial real estate scores maximum points on all three. REIT exemptions, capital allowances, the lighter treatment of real estate gains, carried interest on fund structures: every one of these becomes a revenue target the moment the political conversation turns to ‘where do we find the money to replace eroded labour taxes’. The labs are quietly insulated because broad-base taxation applies to everyone equally. Real estate is specifically exposed because its existing tax position is the one that looks like a loophole to the median voter.

Which means the real estate industry has a counter-intuitive interest in the whole argument. If the labs’ investor pitch turns out to be right, CRE is a sitting duck. If the staircase turns out to be right, CRE is mostly fine. I think the staircase is the more likely outcome, with a caveat I want to be explicit about. It rests on the ‘AI as Normal Technology’ hypothesis being broadly correct on timing (the Princeton argument, from Arvind Narayanan and Sayash Kapoor, that AI diffuses slowly through organisations and institutions rather than arriving as an overnight shock), which I’d say is odds-on but not certain, and on the gains ending up genuinely distributed across the corporate economy rather than concentrated in a handful of dominant firms, which is more uncertain still. Realistically we are probably looking at a ten-year transition rather than the three-year one the cheerleaders imply or the three-decade one the ‘AI is just another tool’ sceptics hope for. That still leaves the real estate industry with meaningful dislocation at the operational level. What it does not leave us with, in the staircase world, is a fiscal raid on real estate’s tax position to fund the state’s response to AI disruption.

The point of this piece is that the real estate industry should be actively arguing for the staircase world, and arguing specifically against the OpenAI framework that assumes the investor pitch is right and proposes to fund the fiscal response through broad-base capital taxation. The honest position is that AI should pay for its own disruption. If the labs generate the rents they promise their investors, those rents should be taxed directly - through targeted instruments on compute, inference, or lab equity events - rather than laundered into a generalised attack on capital that conveniently spares the companies causing the disruption while hitting the industries that had nothing to do with it. Bill Gates was right about this in 2017, and he’s more right about it now. The real estate industry should be the loudest voice making that argument, because under the OpenAI framework real estate pays twice: once through lower tenant demand as AI displaces knowledge workers, and again through higher capital taxes levied to fund the state’s response to the displacement it didn’t cause.

The labs have placed their bets, and the bets contradict each other. The real estate industry has the option of placing a more honest one: that AI should pay for AI. That argument is not being made. It should be.

The Smooth Market That Hides the Rupture

A major new forecasting study suggests the macro picture on AI and office demand will look reassuringly calm. That is precisely why it is dangerous.

I have been arguing for some time that the standard approaches to forecasting AI’s impact on office markets are fundamentally broken. Task-decomposition models that score every occupation for AI susceptibility. Macro forecasts that anchor to GDP growth and assume employment follows. Both miss the point. A new paper, serious, rigorous, and authored by researchers you cannot dismiss, has just handed us something more useful: evidence that the real action is not in the aggregate numbers at all. It is in what those numbers conceal.

Executive summary: A landmark study led by researchers at the Federal Reserve Bank of Chicago, Yale, Stanford, and the University of Pennsylvania finds that economists expect meaningful AI progress by 2030 but only modest changes to headline economic indicators. The macro picture looks calm. But the paper’s occupational data tells a different story: the growth of white-collar employment stalls, routine cognitive roles decline, and the junior knowledge worker pipeline is already thinning. For office markets, this means the aggregate demand signal will look stable while building-level outcomes diverge sharply. The edge moves to those who understand their occupiers deeply enough to see the fractures before the market-level data confirms them.

WHAT THE PAPER SAYS

‘Forecasting the Economic Effects of AI’ was published in March 2026 by Ezra Karger, Otto Kuusela, Philip Tetlock, and colleagues across the Federal Reserve Bank of Chicago, the Forecasting Research Institute, Yale, and Stanford. If you recognise Tetlock’s name, you should: he is the foremost authority on expert prediction. This is not a think-piece. It is a carefully designed survey of economists, AI industry professionals, and superforecasters, eliciting quantitative forecasts under explicit AI-progress scenarios.

Two findings matter for our industry. Neither is the headline number.

The first: a clear majority of economists, 61.4%, now assign meaningful probability to moderate or rapid AI capability progress by 2030. This is not a fringe position. Mainstream economists expect AI to advance substantially within five years.

And yet their unconditional economic forecasts barely move. Median GDP growth of 2.5%. Labour force participation drifting gently from 62.6% to 61.0%. Numbers close enough to historical norms that you could glance at them and feel reassured. AI is coming, but the economy absorbs it. Nothing to see here.

The second finding explains why these two things are not contradictory: capability is not adoption. The economists cite organisational inertia, management lag, regulation, infrastructure bottlenecks, and the well-documented pattern of general-purpose technologies taking decades to reshape aggregate outcomes. Even under the rapid scenario, where AI surpasses human performance on most cognitive and physical tasks, experts do not forecast economic outcomes outside historical experience.

If you stopped reading there, you would feel comfortable.

Do not.

WHERE THE RUPTURE HIDES

The paper includes an occupational composition forecast that is, for office markets, the most important chart published this year.

Under the unconditional scenario, the share of white-collar occupations in the labour force continues its gentle decades-long rise: from 20% today to 21% by 2030, 22% by 2050. Steady. Unremarkable. The trend that has underpinned prime office demand for forty years carries on.

Under the rapid AI scenario, that trend stops. White-collar share rises to 21% by 2030 then falls back to 20% by 2050. Not a collapse. A plateau. Meanwhile, care and service occupations grow from 46% to 57%. Blue-collar shrinks to 8%.

The GDP number looks fine in both scenarios. The composition of that apparently stable economy has profoundly shifted: the segment that fills offices has stopped growing, while the segments that do not are expanding. Like the river that looks the same width and depth from the bank while the current underneath has completely changed direction. The surface is calm. The water is doing something different.

The occupation-level data sharpens this further. The roles economists most confidently expect to decline are general and keyboard clerks, clerical support workers, administrative roles: precisely the occupations that fill the middle floors of most office buildings. The roles expected to grow are personal service workers, healthcare professionals, protective services. Valuable work. Not work that drives office leasing.

And then there is the finding that should genuinely unsettle anyone in this industry. Brynjolfsson, Chandar, and Chen’s research, cited prominently in the paper, documents a 13% relative employment decline for workers aged 22-25 in AI-exposed occupations. This is not a forecast. It is already in the data. And the mechanism matters: wages in those roles actually rose. Firms are not replacing everyone with AI. They are using fewer, more experienced workers. The junior knowledge worker pipeline is thinning. That pipeline is where the next generation of office demand comes from.

None of this shows up in the headline GDP number. None of it is visible in the aggregate labour force participation rate. The macro picture is smooth.

The building-level picture is fracturing.

YOU CAN STILL GO BANKRUPT IN A BOOM

This is why I keep returning to the distinction between ‘need’ and ‘want’ in office markets. The macro numbers can look perfectly healthy while individual buildings empty, specific tenants restructure, and the character of space demand shifts underneath you.

If your tenants are in sectors where AI is stripping out routine cognitive work, your occupancy is at risk regardless of what GDP does. If your building serves the kind of process-heavy, desk-intensive work that is concentrating into fewer, more senior hands, you face a structural demand reduction that no market-level forecast will warn you about. And if you are relying on supply constraints as your strategy, you are confusing a short-term tailwind with a structural position. Supply constraints do not protect you when your tenant decides they need 30% less space and upgrades to someone else’s building.

The paper’s most fundamental finding, for our purposes, is its variance decomposition: expert disagreement about AI’s economic effects is driven primarily by different beliefs about how the economy absorbs AI, not by disagreement about whether AI capabilities will advance. Economists who share similar views on the likelihood of rapid progress nevertheless diverge sharply on diffusion speed, job creation offsets, and institutional responses.

Translated for us: the question is not whether AI will be good enough to change work. It already is. The question is how quickly your specific occupiers will reorganise their workflows, restructure their teams, and change the way they use space. And that varies enormously by sector, by firm size, by management culture, by competitive pressure. It is, unavoidably, an occupier-by-occupier question.

The paper’s own data confirms this. There is no relationship between standard AI-exposure scores and economists’ predictions of which occupations will actually grow or shrink. The models are measuring the wrong thing. The right framework is not task decomposition but two variables: how much more productive will AI make this firm’s people, and is demand for their output expanding or flat?

But getting the analytical framework right is only half the answer. The other half is recognising that the thing most owners monitor most closely, whether their tenant can pay, is no longer the thing that matters most.

CREDIT RISK IS NOT SPACE DEMAND RISK

This is where the paper’s findings become operational.

The standard approach to tenant risk in commercial real estate is credit analysis. Can this tenant pay? Are they financially sound? Will they default? These are important questions. They are also, increasingly, the wrong ones.

The Brynjolfsson data cited in the paper shows firms where revenue grows, wages rise, and headcount falls. The tenant is not in trouble. The tenant is thriving. They are simply doing more with fewer people. A financially healthy occupier restructuring its workforce around AI is a bigger threat to your occupancy than a financially stressed tenant who still needs bodies in seats. The break notice will arrive from a position of strength, not weakness.

This is the decoupling that macro forecasts cannot see and credit analysis will not catch. Your tenant’s balance sheet looks fine. Their space requirement is shrinking.

Those two variables, productivity and growth, are what determine whether that shrinkage is coming. They interact to produce radically different space outcomes. A firm where AI drives a 40% productivity gain but output grows only 10% faces a space reduction of roughly 21%. The same productivity gain in a firm whose output doubles produces a 43% expansion. The question is not how much AI can automate. It is whether productivity gains get absorbed by growth or converted into headcount reduction.
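For anyone who wants to check the arithmetic, here is a minimal sketch. It assumes headcount scales with output divided by productivity and that space per head stays constant; both are simplifications, and the function name `space_change` is purely illustrative.

```python
def space_change(productivity_gain: float, output_growth: float) -> float:
    """Fractional change in headcount, used here as a proxy for space need.

    Assumption: headcount = output / productivity, with space per head fixed.
    Inputs are fractions, e.g. 0.40 for a 40% productivity gain.
    """
    if productivity_gain <= -1:
        raise ValueError("productivity_gain must be greater than -1")
    return (1 + output_growth) / (1 + productivity_gain) - 1


# 40% productivity gain, output up 10%: fewer people needed
slow_growth = space_change(productivity_gain=0.40, output_growth=0.10)
# 40% productivity gain, output doubles: more people needed
fast_growth = space_change(productivity_gain=0.40, output_growth=1.00)

print(f"{slow_growth:+.1%}")  # -21.4%
print(f"{fast_growth:+.1%}")  # +42.9%
```

The break-even is where output growth equals the productivity gain: below it, the gain converts into headcount reduction; above it, growth absorbs the gain and the space requirement expands.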

This paper confirms that logic directly. Its occupational composition data shows exactly the pattern you would expect: under the rapid AI scenario, white-collar employment share plateaus while the economy continues to grow. Productivity is rising. Growth is absorbing some of it. Not all of it. And the segment that fills offices is the segment where the absorption is weakest. For lower-growth economies, the arithmetic is harder still: less growth to absorb the productivity gains means more of those gains convert directly into headcount reduction.

Which means every owner needs to be able to position their tenants on that productivity-growth matrix, not once, in a quarterly review, but continuously. And the signals to do so are largely available right now.

THE INTELLIGENCE YOU ALREADY HAVE

Think about what is knowable about your tenants today.

At the most basic level, and this is work AI can do systematically, you can track the observable indicators. Headcount trends versus revenue trends. Junior-to-senior hiring ratios. Job postings in AI-exposed roles. Earnings call language around operational efficiency and workflow redesign. Sector-level AI adoption patterns. These tell you where a tenant sits on the productivity axis: are they absorbing AI aggressively or gradually? And they tell you something about growth: is demand for what this firm does expanding or contracting?
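As a rough illustration of how two of those indicators could be turned into a position on the productivity-growth matrix, here is a hedged sketch. Everything in it is an assumption made for illustration: the `TenantSnapshot` fields, the use of revenue per head as a productivity proxy, and the cutoff values are hypothetical, not a tested method.

```python
from dataclasses import dataclass


@dataclass
class TenantSnapshot:
    name: str
    revenue_growth: float    # year-on-year, e.g. 0.03 for +3%
    headcount_growth: float  # year-on-year, e.g. -0.10 for -10%


def matrix_position(
    tenant: TenantSnapshot,
    productivity_cutoff: float = 0.10,  # hypothetical threshold
    growth_cutoff: float = 0.05,        # hypothetical threshold
) -> str:
    """Place a tenant in one of four quadrants of the matrix."""
    # Growth in revenue per head: a crude proxy for productivity change.
    productivity = (1 + tenant.revenue_growth) / (1 + tenant.headcount_growth) - 1
    p_label = "high-productivity" if productivity >= productivity_cutoff else "low-productivity"
    g_label = "high-growth" if tenant.revenue_growth >= growth_cutoff else "low-growth"
    return f"{p_label}/{g_label}"


# Revenue up slightly while headcount falls: the pattern to watch.
watch = matrix_position(TenantSnapshot("Tenant A", 0.03, -0.10))
# Revenue and headcount growing together: growth is absorbing the gains.
steady = matrix_position(TenantSnapshot("Tenant B", 0.20, 0.18))
print(watch)
print(steady)
```

In practice the inputs would come from whatever headcount and revenue data you can observe, and the cutoffs would need calibrating by sector. The point is only that the first layer of monitoring reduces to a small, repeatable calculation.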

A step beyond that, you can track what is inferable with effort. Technology partnerships. Subletting activity in their sector. Whether they are investing in their fit-out or letting it run down. Competitive dynamics that might force efficiency regardless of management intent. This requires combining structured and unstructured information, AI-assisted but human-directed.

And then there is the intelligence that only comes from being present. How the tenant talks about their space when they are not negotiating. What the facilities manager mentions in passing. The half-empty floor that does not show up in any dataset. This is what I have called the unmeasurable, the relationship intelligence that automated monitoring will quietly degrade if you do not deliberately protect it.

Each layer feeds the same assessment: where does this occupier sit on the productivity-growth matrix, and is that position changing? The first layer tells you the baseline. The second sharpens it. The third is where you catch the signals the data misses, and it is, not coincidentally, where defensible competitive advantage lives. Everyone will have automated monitoring within two years. Not everyone will have asset managers who know their tenants well enough to read what the monitoring cannot see.

THIS IS NOT A TECHNOLOGY PROBLEM

The temptation is to treat this as a systems challenge: build a platform, integrate the data feeds, deploy the dashboards. That framing is wrong, or at least insufficient. The binding constraint is not technology. It is whether your people think about their tenants this way at all.

A fifteen-person property company with AI-fluent asset managers who routinely run earnings transcripts through an LLM, track hiring patterns against their tenant register, and ask pointed questions at every tenant meeting will see the fractures forming before a large institutional manager whose teams are still assembling quarterly reports by hand. This is cognitive infrastructure, not technology infrastructure. It reflects how people think, not what systems they have.

The minimum viable version is not a platform. It is a question that every asset manager asks at every tenant interaction: how is AI changing how your people work? And then a discipline for connecting the answer to what it means for space.

The sophisticated version builds from there. But the starting point is a shift in what you pay attention to, from whether your tenant can pay the rent to whether your tenant will still want the space.

The market will look fine. Your building might not. The only way to know the difference is to understand your occupiers better than any forecast model ever could.

Not: will this asset perform well? Rather: will this asset’s occupiers do well, and will doing well still mean needing this space?

That is the question now. And it is answerable, if you are willing to look.

The End of Architecture as We Know It

AI and the Built Environment — an honest reckoning

This week we have a special newsletter. Together with Sandeep Ahuja - co-founder of AI-native Architecture practice cove - we’ve written up our thoughts on how the Architecture profession needs to think about AI.

Every profession, when threatened by technology, reaches for the same two arguments. First: this tool cannot do what we do — our work is too complex, too contextual, too human. Second: even if it can, we will always be needed. Architecture has been reaching for both. Neither is quite wrong. Neither is anywhere near enough.

The honest conversation, the one that is harder to have, is not about whether architects survive. It is about what the profession actually is when the production layer underneath it becomes cheap, fast, and largely automated. What is left when the work that consumed most of the hours is no longer where the value lives? What does the architect become, and what does the built environment gain or lose in the process?

Between us, we have been watching this intersection of technology and real estate for a long time — one from the vantage of three decades in PropTech and real estate strategy, the other from building AI systems that are actively redesigning how architecture gets done. We do not agree on every detail of how this plays out. But we agree that the profession deserves a more honest account of what is coming than either its defenders or its critics have been willing to give it.

The wrong question

The industry keeps asking whether AI will replace architects. That question generates more heat than light. A more useful one is: what share of what architecture currently charges for will, within five years, not be worth paying for at current rates, and what becomes newly possible and newly valuable in its place?

When you ask it that way, the answers get uncomfortable fast. AI does not replace the architect. But it replaces, comprehensively and at accelerating speed, the work that the majority of the profession’s workforce has historically been paid to do: translating concepts into documentation, applying codes to floor plans, generating massing options, iterating through compliance across building types and jurisdictions. For most firms doing volume residential and commercial work, this is not the margin of the profession. It is the core.

Dario Amodei, CEO of Anthropic, argued in his January 2026 essay on AI risk that we are compressing what would historically be a century of economic evolution into five to ten years. His warning was about white-collar work broadly. It applies with specific force here. The institutional protections architecture has always relied on — licensure, liability, the genuine complexity of construction — protect the licensed professional at the moment of signing. They do not protect the entire workforce that existed to support that professional, and they do not protect the billing model that assumed that workforce would always be necessary.

What the licensed professional actually becomes

Here is where the conversation in the profession tends to go wrong. The assumption, often unstated, is that as AI handles more of the production, the architect’s role shrinks toward oversight — a reviewer of machine output, a checker, a last pair of eyes before the stamp. That is not only insufficient as a vision. It is the wrong model entirely.

The licensed professional in an AI-native practice is not a quality controller. They are the director. They set the vision, define the constraints that matter, make the judgment calls that cannot be encoded — and then deploy AI to execute against those decisions with a speed and thoroughness that no traditional team could match. The work expands in ambition because the cost of iteration collapses. A principal who once had to ration design exploration because every option cost weeks of human time can now ask genuinely harder questions: What does this site want to be? What does this community actually need? What happens if we challenge the brief entirely? The machine runs the scenarios. The architect decides what they mean.

And this is where something that has always been central to great architecture — but rarely named directly — becomes the defining professional asset. Taste. When AI can generate a hundred viable options where a conventional process produced three, the critical act is no longer generation. It is selection and direction. It is knowing which of those options is not just technically correct or financially optimised, but genuinely right — for the place, for the people, for the cultural moment. Steve Jobs once said the problem with certain technology companies was that they had no taste — that they could execute without any sense of what was worth executing. Architecture has always depended on taste at the highest level. AI does not diminish that. It raises the stakes for it, because taste is now the thing that cannot be automated and increasingly cannot be hidden behind production.

This also changes what the stamp represents. When AI produces the documentation, the architect who signs it is asserting professional judgment over a system they directed and a process they are accountable for understanding. That is a more demanding standard of accountability, not a lighter one. The firms that grasp this are building rigorous human-in-the-loop workflows that reflect it. The ones that do not are the ones who will find the liability they thought AI was absorbing lands squarely back on them.

“When AI can generate a hundred options, the critical act is no longer generation. It is knowing which one is genuinely right. That is taste - and it is profoundly, irreducibly human.”

Where the value rebundles

AI removes scarcity of time and cognitive bandwidth. The analysis that took weeks takes minutes. The iteration that required a team of four requires one. But it introduces new constraints in place of the ones it removes: verifiability, governance, accountability for decisions made at machine speed. The contested urban site, the complex adaptive reuse, the project where a misjudgement is fixed in concrete for fifty years — these demand human direction not because AI lacks capability, but because the consequences are irreversible and the judgment calls genuinely cannot be automated. That is where the profession’s value has always lived at its most serious. AI does not erode it. It clarifies it, by removing everything else.

What this also does, on the engineering side, is worth naming clearly. A large share of structural and MEP work is structured, rule-governed production: modelling, detailing, documentation, coordination. That layer is largely automatable. What remains is systems thinking — understanding how one design decision constrains every other intertwined system, and making judgment calls when they conflict. That is a smaller slice of current engineering hours than the profession would like to admit. It is also the highest-value slice, and it is squarely human.

The firms that will navigate this are already examining their workflows with enough rigour to understand what belongs to each category. The ones that are not are making a strategic choice by default — and the window to make it deliberately is narrowing.

The question the profession is not asking

There is a significant upside to this shift that tends to get buried under the anxiety, but it comes with an honest question attached.

Most of the built environment has never been able to afford serious architectural services. The small commercial conversion, the affordable housing development, the institutional building sitting on obsolete space that no developer will touch because the economics do not pencil — these projects exist in a world where design quality has always been rationed by cost. AI does not just compress margins in the existing market. It makes it economically viable to bring genuine design intelligence — and genuine taste — to clients and communities that could never access it before.

But the harder version of this question is: when design costs fall significantly, who captures the savings? If the answer is primarily developers optimising yield, the built environment gets faster and cheaper without necessarily getting better for the people who occupy it. The profession has an opportunity here that it will only realise if it is deliberate — using the efficiency gains to take on more complex, more community-oriented, more ambitious work, rather than simply racing to the bottom on fee. That choice does not make itself.

“AI makes it viable to bring real design intelligence to places that could never afford it. Whether that benefit reaches communities or just margins is a choice the profession still gets to make.”

What to do with the uncertainty

Amodei has also said: “We, as the producers of this technology, have a duty and an obligation to be honest about what is coming.” We think that obligation extends to anyone with a clear view into what is changing in the built environment. The profession deserves honesty, not comfort.

The profession does not end. But the profession as it has existed — with its particular workforce, its particular economic logic, its particular model of where value sits — is being restructured around a different centre of gravity. The architect as production manager gives way to the architect as director: smaller teams, higher ambition, clearer accountability, and for the first time the computational firepower to actually match the scale of what the built environment needs.

That is not a diminished profession. It is, if the transition is navigated with honesty and intention, a more powerful one — defined not by how much it can produce, but by how well it can choose.

The Dashboard Made Them Blind

Why workflow-level decomposition is the difference between AI that transforms and AI that quietly degrades

Most of what companies call an AI strategy is actually just a shopping list. Tools to evaluate, pilots to run, use cases to ‘explore.’ Which is fine, as far as it goes. It just does not get you very far. Most strategies quietly die in the gap between “we should use AI for asset management” and knowing what to build, where the human remains vital, and how the two combine to produce something genuinely better. This piece tries to close that gap.

EXECUTIVE SUMMARY

A credible AI roadmap starts by decomposing workflows to the task level. Each task then needs to be assessed through four questions: what constraints AI removes, what new ones it introduces, where value emerges across three horizons, and how the work should be redesigned so human and machine compound rather than compete. This piece applies that process to a single workflow: threat identification in an office REIT’s asset management cycle. The findings challenge assumptions: the same task can sit in different places on the CRE Automation Matrix depending on jurisdiction and data quality. Automating the measurable can degrade your capability in the unmeasurable. And the genuinely transformative horizon (H3) isn’t a better dashboard: it’s a different operating model for the function itself.

_______________

This essay pulls together several frameworks I’ve unpacked in recent newsletters - particularly RIRA, the CRE Automation Matrix, and the Prompting Framework - and applies them in detail to a single real estate workflow.

_______________

THE WORKFLOW

Over the past few months I’ve introduced a framework stack for creating value with AI in CRE: RIRA for strategy, the CRE Automation Matrix for analysis, and the Prompting Framework for execution. Together they answer three questions: how are we creating value, what kind of work is this, and how do we get it done?

This piece takes those frameworks off the shelf and applies them to a real workflow at the level of granularity a roadmap actually requires. The aim is not to be cheaper or faster, though you will be both. The aim is to be demonstrably, verifiably better: to produce a quality of output the traditional operating model cannot match. That requires surgical decomposition - seeing exactly where to push hard on the AI and where to optimise the human. Neither happens without genuine system redesign.

Take a hypothetical mid-cap European office REIT. 45 assets across the UK, Germany, and the Netherlands. Property management outsourced. Asset management team of twelve.

The asset manager’s recurring job is to answer four questions continuously:

How is income performing versus plan?

What is threatening that income?

What interventions will protect or grow it?

What needs to happen next?

We’re going to take the second, threat identification, and apply the RIRA process. I’ve chosen this because it’s where the Automation Matrix classification is most contested, and where getting the human-machine boundary wrong creates real financial risk rather than just inefficiency.

Here’s what every asset manager knows but rarely says out loud: they are doing seven fundamentally different jobs simultaneously when they identify threats to income. And what RIRA reveals, when you decompose properly, is that those seven jobs need seven different human-machine configurations.

SEVEN JOBS, SEVEN DIFFERENT PROBLEMS

1. Lease event surveillance. 300+ breaks, expiries, rent reviews, and options in a rolling 24-month window. Knowing not just when they are, but which ones bite: a small lease that’s individually immaterial but leaves an entire floor vacant.

2. Tenant financial monitoring. Payment patterns, public financials, sector stress, balance sheet signals. Ranges from the trivially observable to the analytically complex.

3. Relationship intelligence. Tenant conversations, building walkthroughs, broker networks, the PM’s building manager mentioning something over coffee. None of this is in any database. For experienced asset managers, it is frequently the earliest threat signal.

4. Market threat assessment. Supply pipeline, rental direction, demand shifts, regulatory risk. The data exists; the interpretation of what it means for this specific building does not.

5. Physical and regulatory risk. EPC deadlines are rules-based and trackable. Building condition data is fragmented across PDFs, building management systems, and property managers’ heads.

6. Valuation trajectory. Forming a view on whether the external valuer will write an asset down, which requires understanding not just the comparables but how the specific valuer thinks.

7. Synthesis. Pulling 1–6 together, reading the interactions between them, deciding what to escalate. This is where the experienced asset manager earns their salary.

The instinct, the one most AI strategies follow, is to scan this list and classify: automate surveillance, partially automate credit monitoring, leave valuation judgement alone. That classification is right only at a level so crude that it becomes dangerous.

RELEASE: WHAT CHANGES AND WHAT GETS HARDER

Before deciding what to automate, you need to understand both the constraints AI removes and the ones it introduces.

RIRA starts with Release: map the constraints AI removes and the new ones it introduces. This is where the strategic picture forms.

What opens up:

The surveillance bandwidth constraint disappears. An asset manager with five multi-let buildings triages by rent quantum. Big tenants get attention; small ones get reviewed when something goes wrong. AI removes this: every event monitored continuously, regardless of size. This matters because the damaging event isn’t always the biggest tenant’s break. Sometimes it’s three small tenants all exercising in the same quarter, triggered by the same market dynamic, that nobody spotted as a pattern because each was individually immaterial.
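The clustering case is easy to state and easy to miss, so it is worth making concrete. What follows is a minimal sketch, not a production design. The `LeaseEvent` fields, the 10% and 20% thresholds, and the sample data are all my assumptions for illustration. It groups events by building and quarter, though grouping by submarket or tenant sector would be equally reasonable.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class LeaseEvent:
    building: str
    tenant: str
    event_type: str    # e.g. "break" or "expiry"
    event_date: date
    rent_share: float  # tenant's share of the building's passing rent, 0..1


def quarter_of(d: date) -> tuple:
    """Return (year, quarter) for a date."""
    return d.year, (d.month - 1) // 3 + 1


def clustered_small_events(events, single_threshold=0.10, cluster_threshold=0.20):
    """Find (building, quarter) groups of events that are each below
    single_threshold but together reach cluster_threshold.
    Both thresholds are illustrative assumptions."""
    groups = defaultdict(list)
    for e in events:
        if e.rent_share < single_threshold:
            groups[(e.building, quarter_of(e.event_date))].append(e)
    return {
        key: evs
        for key, evs in groups.items()
        if len(evs) > 1 and sum(e.rent_share for e in evs) >= cluster_threshold
    }


# Hypothetical data: three small tenants and one anchor in the same quarter.
events = [
    LeaseEvent("Building A", "T1", "break", date(2026, 4, 15), 0.08),
    LeaseEvent("Building A", "T2", "break", date(2026, 5, 1), 0.07),
    LeaseEvent("Building A", "T3", "expiry", date(2026, 6, 30), 0.06),
    LeaseEvent("Building A", "Anchor", "break", date(2026, 5, 20), 0.40),
    LeaseEvent("Building B", "T4", "break", date(2026, 4, 1), 0.05),
]

flagged = clustered_small_events(events)
for (building, (year, q)), evs in flagged.items():
    print(building, f"{year} Q{q}", [e.tenant for e in evs])
```

The anchor tenant is deliberately excluded: a triage-by-rent-quantum process already watches it. The sketch only surfaces the group that such triage would skip.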

Evidence assembly collapses from hours to minutes. Portfolio-level pattern recognition becomes possible for the first time: correlated threats across assets that no individual has the bandwidth to see. Multi-scenario analysis per asset per quarter becomes routine instead of exceptional.

What gets harder:

The false negative problem. This deserves to be treated as a first-order design constraint, not a risk to manage after the fact. Automated surveillance monitors what it’s configured to monitor. New threat types - a planning authority changing designations, a shift in corporate occupier strategy, a regulatory change not yet enacted - won’t trigger alerts because nobody anticipated them when the rules were written.

Consider what has actually destroyed significant value in institutional office portfolios recently: COVID, the energy price spike, the acceleration of distributed working, regulatory tightening on EPCs. A monitoring system designed the year before any of these would not have caught them. A good asset manager, reading the market and talking to tenants, might have.

Then there’s automation-induced complacency. Almost every technology tool introduced into asset management over the past 15 years has exhibited the same pattern: the tool replaces attention rather than supplementing it, because people are busy and the tool gives them permission to stop doing the effortful work. Automated rent tracking was introduced; people stopped walking floors. Dashboards appeared; people stopped reading detailed PM reports.

The uncomfortable implication is that the better automated surveillance works for known threats, the more it can degrade your capability for unknown ones. Better AI does not fix this. It is a human behaviour problem that the Redesign phase of RIRA has to address directly.

Add the verification gap - who checks the AI-assembled threat picture when the asset manager’s implicit quality control came from assembling it themselves? And the PM data governance question - were those outsourced PM contracts even written to contemplate automated analysis? With second-order consequences like these, the constraints-introduced side of the ledger is at least as consequential as the constraints-removed side.

THE AUTOMATION MATRIX: WHERE CLASSIFICATION GETS INTERESTING

The mistake is to treat a workflow as one kind of work. It rarely is.

With constraints mapped, you classify each task on the CRE Automation Matrix: what kind of work is this, and how verifiable is it? The generic classification - lease surveillance is Quadrant A, tenant credit is Quadrant B, valuation is Quadrant D - looks clean.

But it’s wrong in three ways that matter.

The same task sits in a different quadrant depending on jurisdiction. Lease event surveillance for well-abstracted UK leases with verified data is Quadrant A: rules-based, testable, automatable. But German commercial leases operate under BGB provisions with fundamentally different mechanics, from how break rights and rent adjustments work to how formal documentation requirements apply. Even after the 2025 reform that relaxed the old Schriftform written-form standard, new complexities have emerged around documentation and the legal status of informal agreements. Dutch office leases under Article 7:230a of the Civil Code have their own distinct framework again: different renewal and termination mechanics, a critical classification question about which statutory regime applies, and materially different consequences depending on the answer.

One workflow. Three markets. Three different quadrant positions. Build a system for the UK classification and deploy it uniformly: reliable for English leases, potentially dangerous elsewhere. The point generalises beyond these three jurisdictions: wherever you operate across borders, the automation profile is jurisdiction-specific. Local knowledge isn’t a nice-to-have. It’s a structural requirement.

The same task shifts quadrant depending on data quality. Tenant credit analysis is Quadrant B where data is clean: public financials, credit ratings, structured sector data. For privately held Continental European tenants where public data is limited or delayed, the same analysis moves into hard-to-verify territory. The evidence base isn’t there. You’re relying on relationship intelligence, not structured data.

Automating the measurable degrades your capability in the unmeasurable. This is the finding that matters most. Build a tenant credit dashboard. It monitors payment patterns and public financials well. It generates confidence. And because the dashboard is ‘handling’ tenant risk, the pressure on asset managers to maintain their tenant relationships - the conversations, the site visits, the reading of signals - quietly diminishes. People are busy. The dashboard gives them permission to stop.

But the tenant about to exercise a break doesn’t always show up in the financials. They show up in how they’ve stopped investing in their fit-out, the half-empty floors, the facilities manager who mentions in passing that the company is looking at options. That intelligence comes from being present. If you’re not present because the system is ‘handling it,’ you’ve automated yourself into a blind spot.
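The first two points, that a task's quadrant depends on jurisdiction and on data quality, can be sketched as a classification that takes context as an input rather than assuming it. This is a hedged illustration only. The task names, the data-quality labels and the `NEEDS_LOCAL_REVIEW` fallback are my assumptions. The only quadrant assignments encoded are the two given above; everything else is deliberately routed to a human rather than guessed.

```python
NEEDS_LOCAL_REVIEW = "needs local review"


def classify(task: str, jurisdiction: str, data_quality: str) -> str:
    """Return a CRE Automation Matrix quadrant for a task in context.

    Only combinations stated in the text are mapped. Anything else falls
    back to manual classification instead of inheriting a generic quadrant.
    """
    if task == "lease_event_surveillance":
        # Well-abstracted UK leases with verified data: rules-based, testable.
        if jurisdiction == "UK" and data_quality == "verified":
            return "A"
    elif task == "tenant_credit":
        # Public financials, credit ratings, structured sector data.
        if data_quality == "clean":
            return "B"
    return NEEDS_LOCAL_REVIEW


for context in [
    ("lease_event_surveillance", "UK", "verified"),
    ("lease_event_surveillance", "DE", "verified"),
    ("lease_event_surveillance", "NL", "verified"),
    ("tenant_credit", "UK", "clean"),
    ("tenant_credit", "DE", "limited"),
]:
    print(context, "->", classify(*context))
```

The design choice that matters is the default. A system that falls back to the UK classification fails silently in Germany and the Netherlands; one that falls back to review fails loudly.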

THREE HORIZONS: FROM FASTER TO FUNDAMENTALLY DIFFERENT

Once the task types are clear, the question becomes not just what gets cheaper, but what becomes possible.

H1: the efficiency gains. Automated calendars, payment dashboards, market data assembly, compliance trackers. Worth doing immediately. Table stakes within two years. Same picture, faster. Every REIT will build these.

H2: the capability upgrade. Evidence-linked threat assessments: every claim traced to source - the lease clause, the comparables with adjustment logic, the payment history, the sector outlook. The asset manager doesn’t assemble the picture; they evaluate it. A fundamentally different use of their time.
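One way to picture "every claim traced to source" is as a data structure in which a claim without evidence is visibly incomplete. The sketch below is an assumption about shape, not a description of any existing system. The class names, field names and sample references are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Evidence:
    source_type: str  # e.g. "lease_clause", "payment_history", "comparable"
    reference: str    # where a reviewer can find the underlying document


@dataclass
class Claim:
    statement: str
    evidence: list = field(default_factory=list)


@dataclass
class ThreatAssessment:
    asset: str
    claims: list

    def unsupported_claims(self):
        """Claims with no linked evidence; these need human attention first."""
        return [c for c in self.claims if not c.evidence]

    def ready_for_review(self) -> bool:
        """True only when every claim carries at least one source."""
        return not self.unsupported_claims()


# Hypothetical assessment: one sourced claim, one unsourced inference.
assessment = ThreatAssessment(
    asset="Hypothetical Building A",
    claims=[
        Claim(
            "Tenant X holds a break option inside the 24-month window",
            [Evidence("lease_clause", "hypothetical reference: lease, break clause")],
        ),
        Claim("Tenant X is likely to exercise the break"),
    ],
)

for claim in assessment.unsupported_claims():
    print("No source:", claim.statement)
```

The second claim is exactly the kind the asset manager should spend time on: an inference the system cannot ground, which either gets evidence attached or gets challenged.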

Portfolio-level pattern detection: a capability that doesn’t exist today in any form, even manual. The system surfaces correlated threats that no individual can see. The portfolio committee conversation shifts from “tell me about your buildings” to “here are the portfolio-level patterns - which do we act on?”

These are frontier capabilities - cognitive work made verifiable through evidence engineering. Hard to build. Hard to replicate. Where defensible competitive advantage lives.

H3: the transformation. Now apply the H3 Provocation Framework - the questions designed to push past efficiency and capability into genuine structural change.

What becomes free, and whose business breaks? The binding constraint in threat identification is the asset manager’s cognitive bandwidth: deep monitoring of 3–5 assets, not more. If AI removes the surveillance and evidence assembly constraints, a senior asset manager can perhaps oversee 8–10. The relationship layer still sets the ceiling - you can’t maintain deep tenant intelligence across fifteen buildings - but that ceiling rises meaningfully. Whose business model depends on the current bandwidth constraint? Every outsourced asset management provider whose fee assumes the current ratio of human attention per asset.
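The arithmetic behind that question is simple enough to run against the hypothetical REIT. This is a rough sketch under a loud assumption: it counts only the people needed to cover 45 assets at a given span, and ignores everything else an asset management team does. The function name is mine.

```python
import math

ASSETS = 45  # the hypothetical REIT's portfolio


def managers_needed(assets: int, assets_per_manager: int) -> int:
    """Smallest whole number of asset managers that covers every asset."""
    return math.ceil(assets / assets_per_manager)


# Current span of 3-5 assets per manager.
current_low = managers_needed(ASSETS, 5)
current_high = managers_needed(ASSETS, 3)

# Possible span of 8-10 once surveillance and evidence assembly are automated.
future_low = managers_needed(ASSETS, 10)
future_high = managers_needed(ASSETS, 8)

print(f"Coverage at 3-5 assets each: {current_low}-{current_high} managers")
print(f"Coverage at 8-10 assets each: {future_low}-{future_high} managers")
```

The team of twelve sits inside the first range. The second range is not a headcount recommendation; it is the size of the gap that a fee model built on the current ratio has to explain.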